Compare commits

185 Commits

| SHA1 |
|---|
| 9e2b55a249 |
| 0fbb94cd72 |
| 004b3f8574 |
| 7d1931dd17 |
| 8b7f41afa7 |
| 4bc0832865 |
| a66c6d5f40 |
| 33b7cae24d |
| 47f5c88ef2 |
| ffe382aee2 |
| 919f71ee78 |
| 404d4476ae |
| f2b243cd5f |
| c2fae41355 |
| 8fda2cde9e |
| 930380cdce |
| 5b788ffe15 |
| 521c2bdde5 |
| eee73b1218 |
| 87d6da26c9 |
| 2029cd5cd2 |
| 36be752ee6 |
| 5b3586789f |
| 6ce670e643 |
| dd70e8139c |
| 3ac0936d1a |
| 1477bacf6a |
| d339a18901 |
| f9460416d9 |
| a9112cf3da |
| c873b49700 |
| 3c553e37d8 |
| 0c47fbb1f7 |
| 476138ef53 |
| 385ca4f0fa |
| 46fd642789 |
| e48249c7c9 |
| 9e8535e97e |
| a794c63a5a |
| f3610a46a2 |
| 20fd2cf6e3 |
| 7bf345d09d |
| 17e9560449 |
| c02e6a565e |
| 7fbc9b9bde |
| 416e97d488 |
| 753060d9f3 |
| 972c53000c |
| 2b948a49a0 |
| 7999548738 |
| d4d13b793f |
| 210b6f0d89 |
| 7f5894b274 |
| 2dc24ab945 |
| 2f153c9974 |
| fa22647acd |
| dd5d82fe7a |
| 98b179aeb5 |
| e1f1c005a0 |
| 6e226c5a4f |
| 7440fa5a37 |
| 4fe204605a |
| 4446b42b82 |
| 4b6cd17d0a |
| 1a6e74271c |
| 6ba3719031 |
| dd95e3df7e |
| 69fd7853c8 |
| c01c478ffe |
| f8be1da83a |
| 3a7625486e |
| fdc3b6c573 |
| 76939ed51f |
| b9cf761f4a |
| 4c515ba541 |
| d7c3595bf1 |
| 1fbd6a0824 |
| ccb59c7f02 |
| 04bef3e82a |
| 17105b98ed |
| 4bff1515a9 |
| 0a75893346 |
| 2ed92467f9 |
| 634ac122d9 |
| 44640b7e53 |
| 47e7b22a7e |
| 918928d4bb |
| 69fc172779 |
| d84dabbe4d |
| 23114210c4 |
| ea80e5a223 |
| 6087f31d41 |
| 30ee292a32 |
| 705a9319f5 |
| c789d9d87c |
| a7681b5505 |
| 9e74d8af0b |
| b52061f849 |
| 01b875c283 |
| 4cc3b78321 |
| 6205db87e6 |
| 518633b153 |
| 988ee7b7e7 |
| cdadde60ce |
| 4bb01d86d9 |
| 4cac43520f |
| d6dddd16f1 |
| c0da054635 |
| 2b4d94ca55 |
| e8e564738a |
| d48fbd8b62 |
| c1f80f209e |
| ed6b32c827 |
| fc436fd352 |
| ee6fdb1ca1 |
| 988db30355 |
| ea98ee5e99 |
| b8d1d43822 |
| 0d017c6d14 |
| 2825e9a003 |
| 6e9ddfcbf2 |
| 378689be39 |
| 31858fad12 |
| 60351d629d |
| 715a97159a |
| b48ce28b35 |
| 7ab0448cd3 |
| 5f6642fa63 |
| 5a0d1ed408 |
| 131e8fb6be |
| 1c7fb8ef93 |
| 8c0ec3957f |
| 72063a15d9 |
| 0d1b15aafc |
| ca10369bdc |
| 42af75d8d2 |
| a02871dd28 |
| e65a8bc648 |
| b373b6a34f |
| 6d6a0255e2 |
| 003d6a3d5f |
| 77a2c60fe5 |
| ac3bd699ee |
| 596498c81e |
| c95f764c77 |
| 5c5be05843 |
| 3fb26ec49e |
| 3f767d22e9 |
| 7f3fb0d82d |
| d56c132459 |
| acdce762c9 |
| bd557d9652 |
| 3363d13fa0 |
| 52ba44e260 |
| f06c2dae23 |
| 55a636f4d1 |
| 0fc8730272 |
| 61a2bc466e |
| 62b1923bf4 |
| 8e25376a12 |
| a9ab5d45a4 |
| ce2a2f0b93 |
| 9cb6b0b665 |
| dfc21fc0e9 |
| 19b089e6c6 |
| 02aa2734e0 |
| 66f9fd7231 |
| 1b125cb704 |
| 29f5d85c7b |
| c192a1f31c |
| 3b20daf807 |
| 760c00e8ae |
| 6d8d3788a6 |
| 98e23e0033 |
| 4d7aff3458 |
| 33e47696dc |
| c0f8825f83 |
| 7c26956cd4 |
| 52f02cd5d0 |
| 0df6b20147 |
| 253a2dda7d |
| d762a85130 |
| a765e8cf2e |
| 3e7fd1140c |
| a56631510d |

`.github/ISSUE_TEMPLATE/bug_report.md` (vendored): 2 lines changed

    @@ -6,7 +6,7 @@ labels: bug
     assignees: ''
     
     ---
    -
    +<!--Please be aware that GNOME Code of Conduct applies to Alpaca, https://conduct.gnome.org/-->
     **Describe the bug**
     A clear and concise description of what the bug is.
     

`.github/ISSUE_TEMPLATE/feature_request.md` (vendored): 2 lines changed

    @@ -6,7 +6,7 @@ labels: enhancement
     assignees: ''
     
     ---
    -
    +<!--Please be aware that GNOME Code of Conduct applies to Alpaca, https://conduct.gnome.org/-->
     **Is your feature request related to a problem? Please describe.**
     A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]
     

`.github/workflows/flatpak-builder.yml` (vendored, new file): 18 lines changed

    @@ -0,0 +1,18 @@
    +# .github/workflows/flatpak-build.yml
    +on:
    +  workflow_dispatch:
    +name: Flatpak Build
    +jobs:
    +  flatpak:
    +    name: "Flatpak"
    +    runs-on: ubuntu-latest
    +    container:
    +      image: bilelmoussaoui/flatpak-github-actions:gnome-46
    +      options: --privileged
    +    steps:
    +    - uses: actions/checkout@v4
    +    - uses: flatpak/flatpak-github-actions/flatpak-builder@v6
    +      with:
    +        bundle: Alpaca.flatpak
    +        manifest-path: com.jeffser.Alpaca.json
    +        cache-key: flatpak-builder-${{ github.sha }}
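This workflow declares only a `workflow_dispatch` trigger, so it runs when someone starts it manually rather than on every push. Here is a minimal sketch of requesting that manual run through GitHub's REST endpoint `POST /repos/{owner}/{repo}/actions/workflows/{workflow_id}/dispatches`, which accepts the workflow file name as `workflow_id`. It assumes you have a GitHub token that is allowed to run Actions on the repository. The helper name `build_dispatch_request` and the token placeholder are hypothetical.

```python
import json
import urllib.request


def build_dispatch_request(owner, repo, workflow_file, ref, token):
    """Build (without sending) a request that starts a workflow_dispatch run.

    Hypothetical helper for illustration; the caller supplies the token.
    """
    url = (f"https://api.github.com/repos/{owner}/{repo}"
           f"/actions/workflows/{workflow_file}/dispatches")
    # The body names the branch or tag whose copy of the workflow should run.
    body = json.dumps({"ref": ref}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Accept": "application/vnd.github+json",
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


if __name__ == "__main__":
    request = build_dispatch_request("Jeffser", "Alpaca", "flatpak-builder.yml", "main", "<token>")
    print(request.get_method(), request.full_url)
```

The function only builds the request. Passing it to `urllib.request.urlopen` would actually send it.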

`.github/workflows/pylint.yml` (vendored, new file): 24 lines changed

    @@ -0,0 +1,24 @@
    +name: Pylint
    +
    +on:
    +  workflow_dispatch:
    +
    +jobs:
    +  build:
    +    runs-on: ubuntu-latest
    +    strategy:
    +      matrix:
    +        python-version: ["3.11"]
    +    steps:
    +    - uses: actions/checkout@v4
    +    - name: Set up Python ${{ matrix.python-version }}
    +      uses: actions/setup-python@v3
    +      with:
    +        python-version: ${{ matrix.python-version }}
    +    - name: Install dependencies
    +      run: |
    +        python -m pip install --upgrade pip
    +        pip install pylint
    +    - name: Analysing the code with pylint
    +      run: |
    +        pylint --rcfile=.pylintrc $(git ls-files '*.py' | grep -v 'src/available_models_descriptions.py')
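The last step lints every tracked Python file except `src/available_models_descriptions.py`. Here is a small pure-Python sketch of that file selection, which makes the filtering explicit. It assumes the list of tracked paths is passed in directly instead of being read from `git ls-files`, and it uses `fnmatch` to stand in for git's pathspec matching. The function name is hypothetical.

```python
import fnmatch

# The one file the workflow excludes from linting.
EXCLUDED_PATTERN = "src/available_models_descriptions.py"


def lint_targets(tracked_paths):
    """Approximate `git ls-files '*.py' | grep -v EXCLUDED_PATTERN`."""
    targets = []
    for path in tracked_paths:
        # Like git's default pathspec, fnmatch's '*' also matches '/',
        # so '*.py' selects Python files in every directory.
        if not fnmatch.fnmatchcase(path, "*.py"):
            continue
        # grep -v drops any line containing the pattern; here it is treated
        # as a plain substring, whereas grep reads each '.' as a wildcard.
        if EXCLUDED_PATTERN in path:
            continue
        targets.append(path)
    return targets


print(lint_targets(["src/main.py", "src/available_models_descriptions.py", "README.md"]))
# ['src/main.py']
```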

`.pylintrc` (new file): 14 lines changed

    @@ -0,0 +1,14 @@
    +[MASTER]
    +
    +[MESSAGES CONTROL]
    +disable=undefined-variable, line-too-long, missing-function-docstring, consider-using-f-string, import-error
    +
    +[FORMAT]
    +max-line-length=200
    +
    +# Reasons for removing some checks:
    +# undefined-variable: _() is used by the translator on build time but it is not defined on the scripts
    +# line-too-long: I... I'm too lazy to make the lines shorter, maybe later
    +# missing-function-docstring I'm not adding a docstring to all the functions, most are self explanatory
    +# consider-using-f-string I can't use f-string because of the translator
    +# import-error The linter doesn't have access to all the libraries that the project itself does
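Two of the disabled checks stem from gettext. Modules that call `_()` without defining it typically rely on a `_` that the application installs into Python's builtins at startup, and pylint cannot see that. The translatable text must also reach `_()` as a literal template, not as an already formatted f-string. The sketch below demonstrates both points with a tiny in-memory catalog. `DemoTranslations` and the Spanish string are hypothetical stand-ins for a compiled `.mo` file, not Alpaca's actual setup.

```python
import gettext


class DemoTranslations(gettext.NullTranslations):
    """Hypothetical in-memory catalog standing in for a compiled .mo file."""

    def __init__(self, catalog):
        super().__init__()
        self._catalog = catalog

    def gettext(self, message):
        # Translations are looked up by the exact source string (the msgid).
        return self._catalog.get(message, message)


# install() binds `_` in Python's builtins, which is why pylint, reading a
# module on its own, reports `_` as an undefined variable.
DemoTranslations({"Welcome, %s": "Bienvenido, %s"}).install()


def welcome(name):
    # Look up the template first, then format: the translation is found.
    return _("Welcome, %s") % name


def welcome_fstring(name):
    # The f-string is formatted first, so the lookup key is "Welcome, Ana",
    # which no catalog contains, and the English text comes back unchanged.
    return _(f"Welcome, {name}")


print(welcome("Ana"))          # Bienvenido, Ana
print(welcome_fstring("Ana"))  # Welcome, Ana
```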

`Alpaca.doap` (new file): 34 lines changed

    @@ -0,0 +1,34 @@
    +<Project xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#"
    +         xmlns:rdfs="http://www.w3.org/2000/01/rdf-schema#"
    +         xmlns:foaf="http://xmlns.com/foaf/0.1/"
    +         xmlns:gnome="http://api.gnome.org/doap-extensions#"
    +         xmlns="http://usefulinc.com/ns/doap#">
    +
    +  <name xml:lang="en">Alpaca</name>
    +  <shortdesc xml:lang="en">An Ollama client made with GTK4 and Adwaita</shortdesc>
    +  <homepage rdf:resource="https://jeffser.com/alpaca" />
    +  <bug-database rdf:resource="https://github.com/Jeffser/Alpaca/issues"/>
    +  <programming-language>Python</programming-language>
    +
    +  <platform>GTK 4</platform>
    +  <platform>Libadwaita</platform>
    +
    +  <maintainer>
    +    <foaf:Person>
    +      <foaf:name>Jeffry Samuel</foaf:name>
    +      <foaf:mbox rdf:resource="mailto:jeffrysamuer@gmail.com"/>
    +      <foaf:account>
    +        <foaf:OnlineAccount>
    +          <foaf:accountServiceHomepage rdf:resource="https://github.com"/>
    +          <foaf:accountName>jeffser</foaf:accountName>
    +        </foaf:OnlineAccount>
    +      </foaf:account>
    +      <foaf:account>
    +        <foaf:OnlineAccount>
    +          <foaf:accountServiceHomepage rdf:resource="https://gitlab.gnome.org"/>
    +          <foaf:accountName>jeffser</foaf:accountName>
    +        </foaf:OnlineAccount>
    +      </foaf:account>
    +    </foaf:Person>
    +  </maintainer>
    +</Project>
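The DOAP file is RDF/XML, so its elements live in several XML namespaces, and the root's default namespace applies to `<maintainer>` as well. Here is a minimal sketch of reading the maintainer entries back with Python's standard `xml.etree.ElementTree`. The function name and the trimmed `SAMPLE` document are illustrative.

```python
import xml.etree.ElementTree as ET

NS = {
    "doap": "http://usefulinc.com/ns/doap#",
    "foaf": "http://xmlns.com/foaf/0.1/",
    "rdf": "http://www.w3.org/1999/02/22-rdf-syntax-ns#",
}
RDF_RESOURCE = f"{{{NS['rdf']}}}resource"  # Clark notation for rdf:resource

SAMPLE = """<Project xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#"
         xmlns:foaf="http://xmlns.com/foaf/0.1/"
         xmlns="http://usefulinc.com/ns/doap#">
  <name xml:lang="en">Alpaca</name>
  <maintainer>
    <foaf:Person>
      <foaf:name>Jeffry Samuel</foaf:name>
      <foaf:account>
        <foaf:OnlineAccount>
          <foaf:accountServiceHomepage rdf:resource="https://github.com"/>
          <foaf:accountName>jeffser</foaf:accountName>
        </foaf:OnlineAccount>
      </foaf:account>
    </foaf:Person>
  </maintainer>
</Project>"""


def maintainers(doap_xml):
    """Return [(name, [(service homepage, account name), ...]), ...]."""
    root = ET.fromstring(doap_xml)
    people = []
    # Because of the default namespace, <maintainer> is addressed as doap:maintainer.
    for person in root.findall("doap:maintainer/foaf:Person", NS):
        accounts = [
            (acct.find("foaf:accountServiceHomepage", NS).get(RDF_RESOURCE),
             acct.findtext("foaf:accountName", namespaces=NS))
            for acct in person.findall("foaf:account/foaf:OnlineAccount", NS)
        ]
        people.append((person.findtext("foaf:name", namespaces=NS), accounts))
    return people


print(maintainers(SAMPLE))
# [('Jeffry Samuel', [('https://github.com', 'jeffser')])]
```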

61

README.md

61

README.md

@@ -11,7 +11,11 @@ Alpaca is an [Ollama](https://github.com/ollama/ollama) client where you can man

|

|||||||

> [!WARNING]

|

> [!WARNING]

|

||||||

> This project is not affiliated at all with Ollama, I'm not responsible for any damages to your device or software caused by running code given by any AI models.

|

> This project is not affiliated at all with Ollama, I'm not responsible for any damages to your device or software caused by running code given by any AI models.

|

||||||

|

|

||||||

|

> [!IMPORTANT]

|

||||||

|

> Please be aware that [GNOME Code of Conduct](https://conduct.gnome.org) applies to Alpaca before interacting with this repository.

|

||||||

|

|

||||||

## Features!

|

## Features!

|

||||||

|

|

||||||

- Talk to multiple models in the same conversation

|

- Talk to multiple models in the same conversation

|

||||||

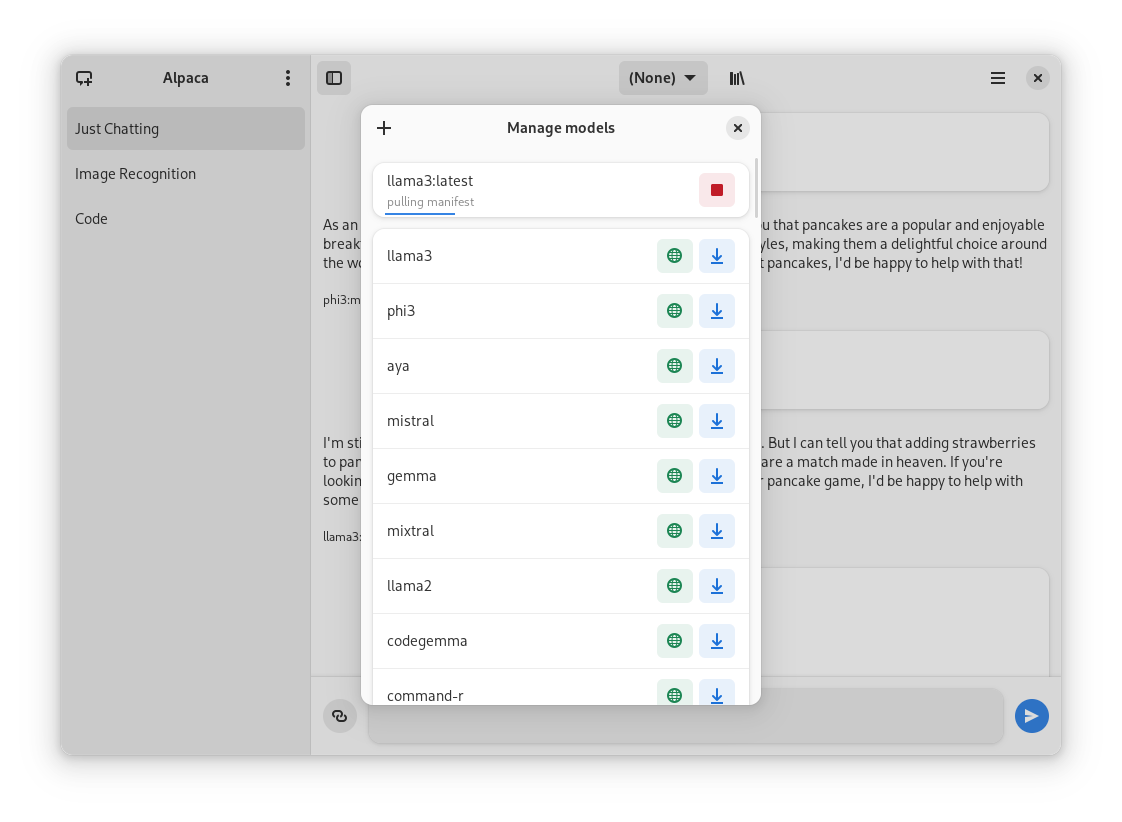

- Pull and delete models from the app

|

- Pull and delete models from the app

|

||||||

- Image recognition

|

- Image recognition

|

||||||

@@ -21,47 +25,38 @@ Alpaca is an [Ollama](https://github.com/ollama/ollama) client where you can man

|

|||||||

- Notifications

|

- Notifications

|

||||||

- Import / Export chats

|

- Import / Export chats

|

||||||

- Delete / Edit messages

|

- Delete / Edit messages

|

||||||

|

- Regenerate messages

|

||||||

- YouTube recognition (Ask questions about a YouTube video using the transcript)

|

- YouTube recognition (Ask questions about a YouTube video using the transcript)

|

||||||

- Website recognition (Ask questions about a certain question by parsing the url)

|

- Website recognition (Ask questions about a certain website by parsing the url)

|

||||||

|

|

||||||

## Screenies

|

## Screenies

|

||||||

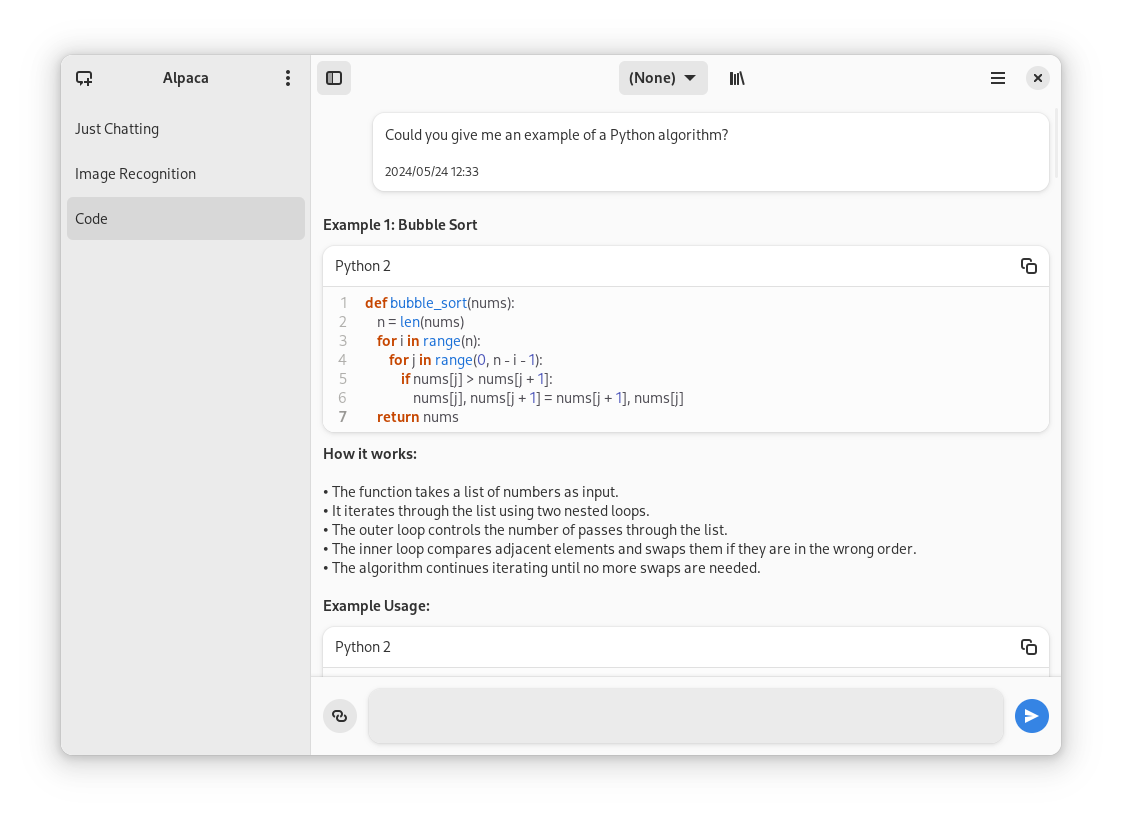

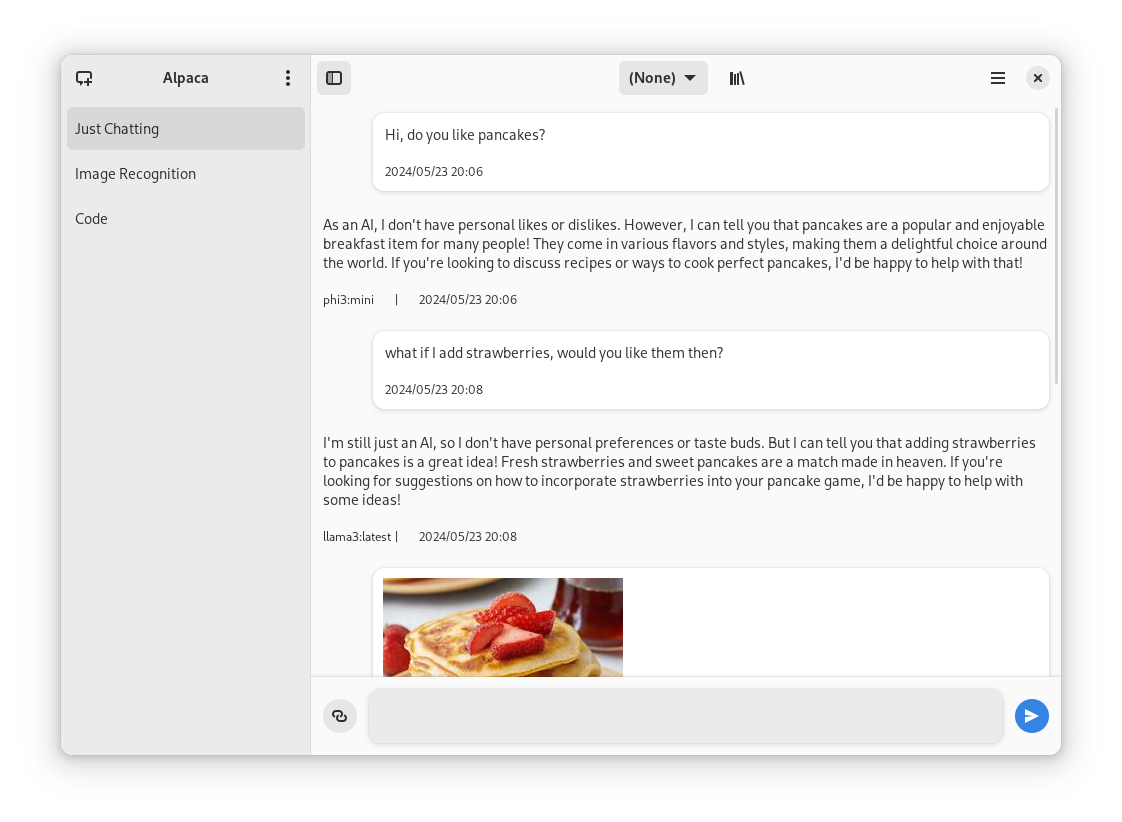

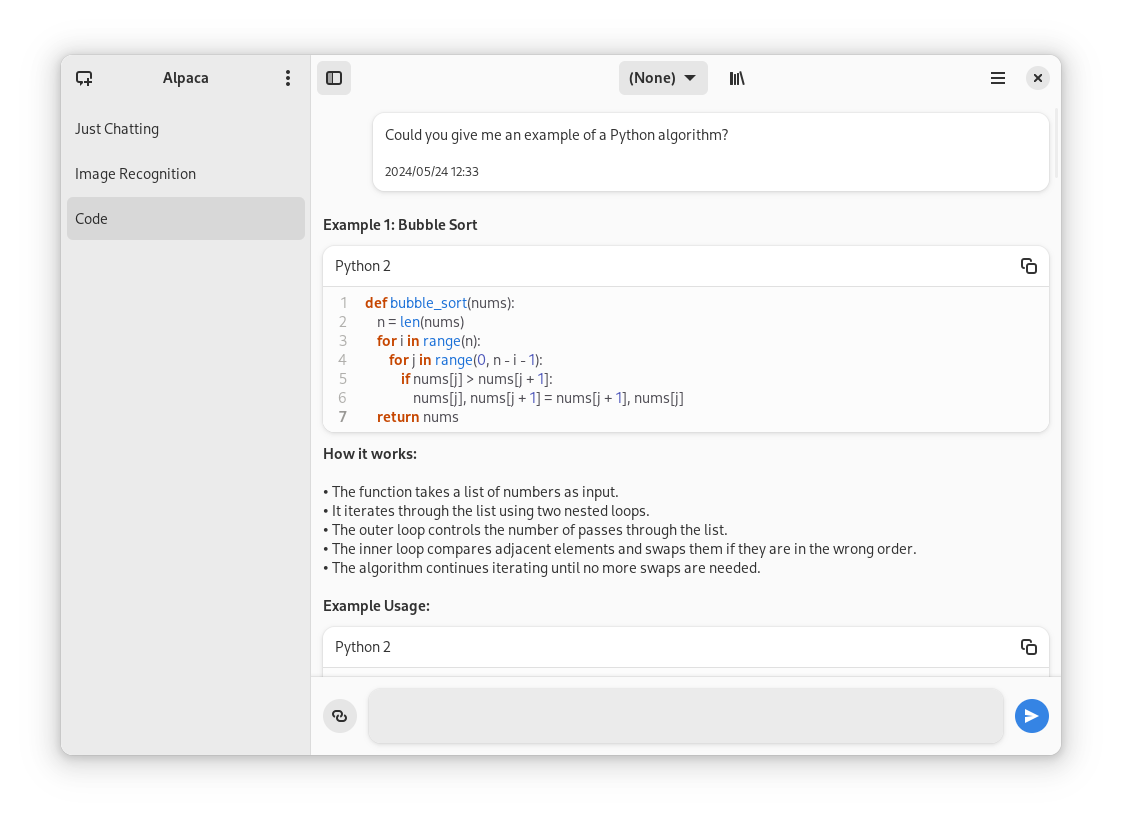

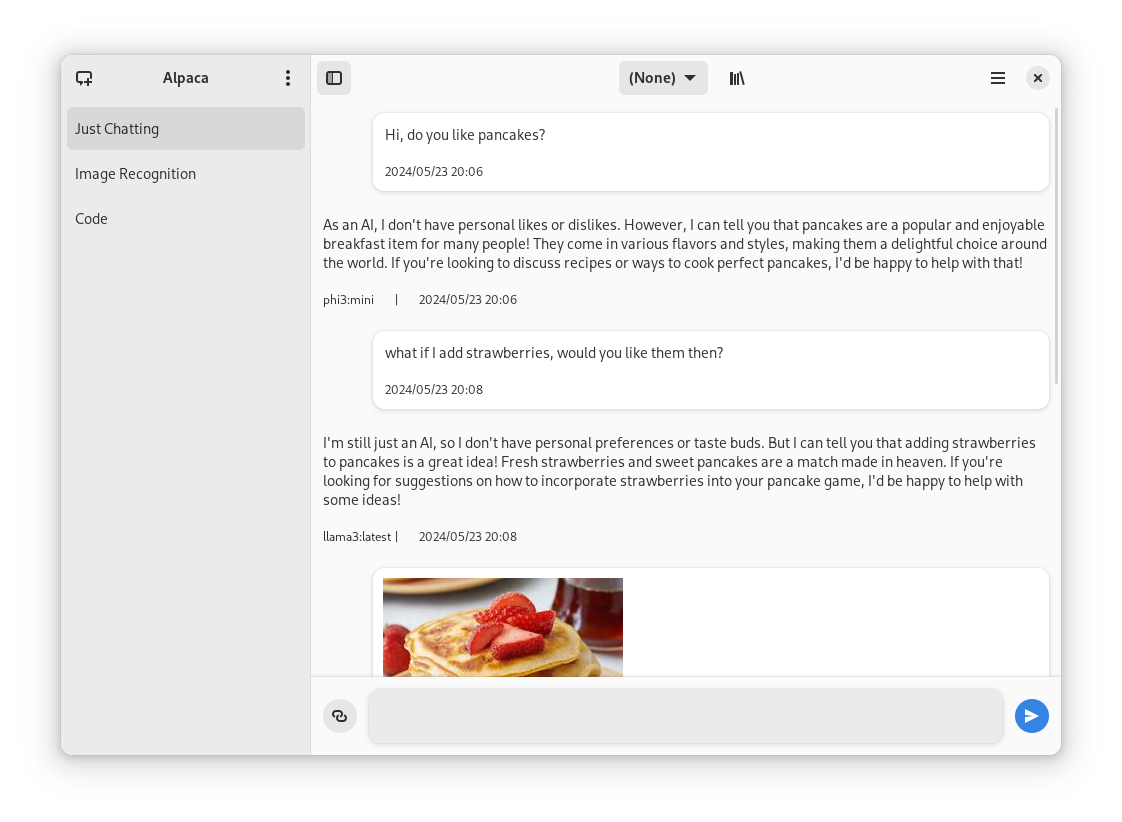

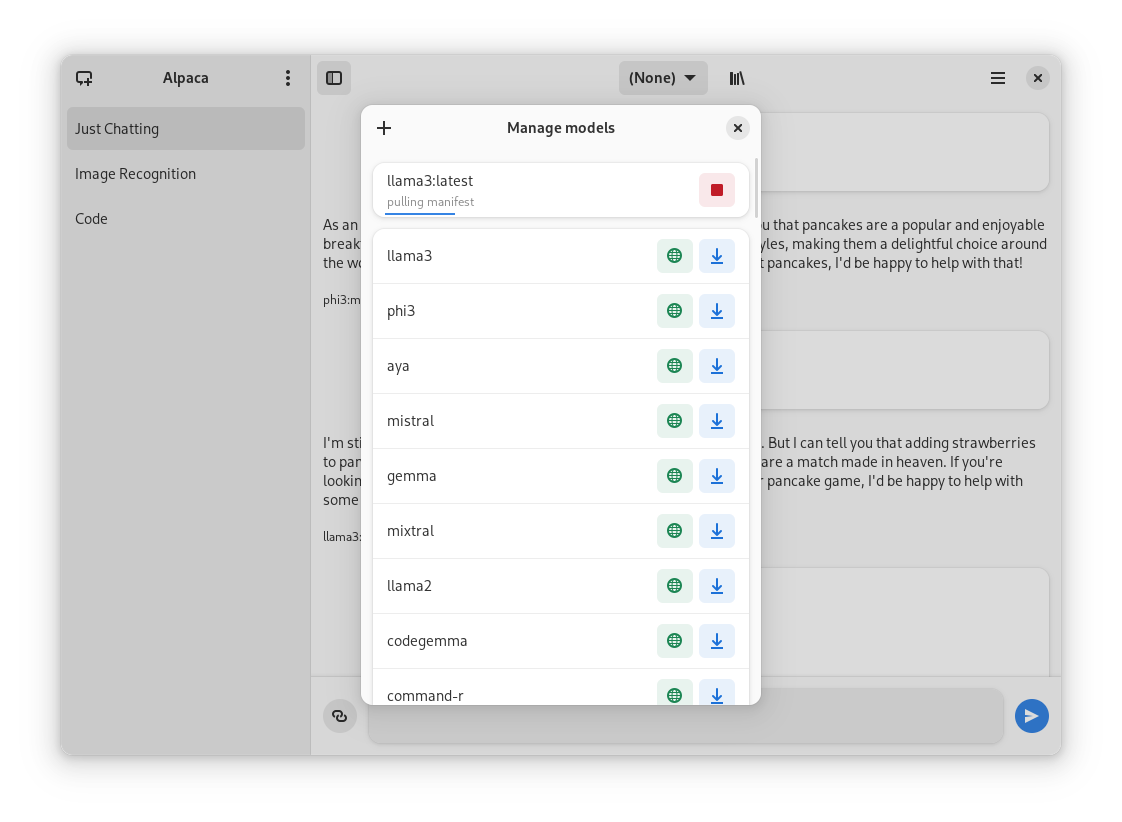

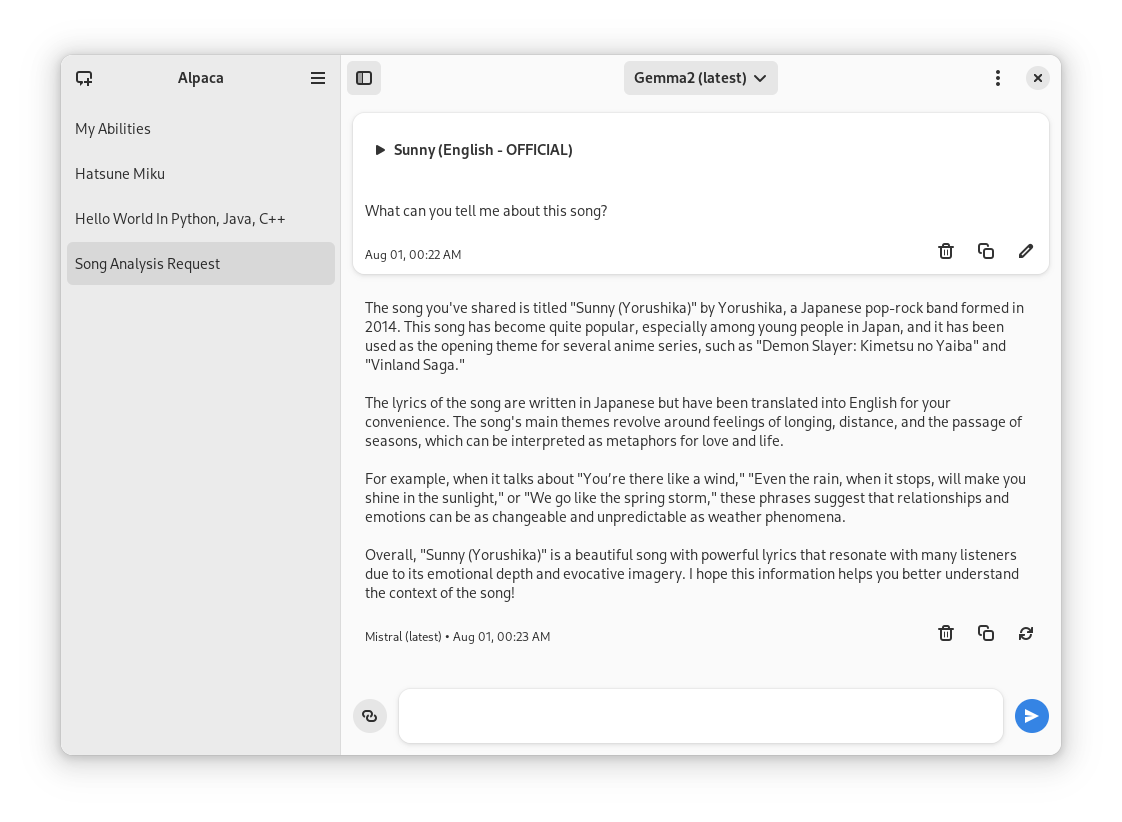

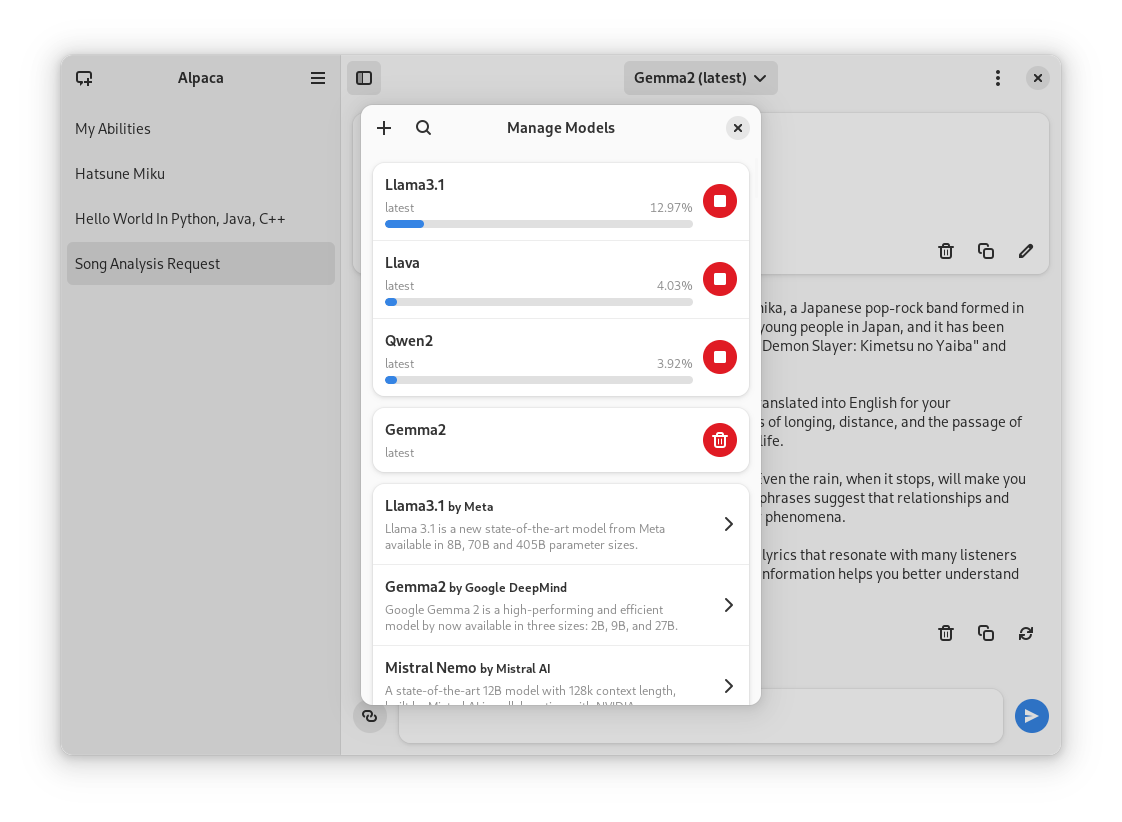

Chatting with a model | Image recognition | Code highlighting

|

|

||||||

:--------------------:|:-----------------:|:----------------------:

|

|

||||||

|  |

|

|

||||||

|

|

||||||

## Preview

|

Normal conversation | Image recognition | Code highlighting | YouTube transcription | Model management

|

||||||

1. Clone repo using Gnome Builder

|

:------------------:|:-----------------:|:-----------------:|:---------------------:|:----------------:

|

||||||

2. Press the `run` button

|

|  |  |  |

|

||||||

|

|

||||||

## Instalation

|

## Translators

|

||||||

1. Go to the `releases` page

|

|

||||||

2. Download the latest flatpak package

|

|

||||||

3. Open it

|

|

||||||

|

|

||||||

## Ollama session tips

|

Language | Contributors

|

||||||

|

:----------------------|:-----------

|

||||||

### Change the port of the integrated Ollama instance

|

🇷🇺 Russian | [Alex K](https://github.com/alexkdeveloper)

|

||||||

Go to `~/.var/app/com.jeffser.Alpaca/config/server.json` and change the `"local_port"` value, by default it is `11435`.

|

🇪🇸 Spanish | [Jeffry Samuel](https://github.com/jeffser)

|

||||||

|

🇫🇷 French | [Louis Chauvet-Villaret](https://github.com/loulou64490) , [Théo FORTIN](https://github.com/topiga)

|

||||||

### Backup all the chats

|

🇧🇷 Brazilian Portuguese | [Daimar Stein](https://github.com/not-a-dev-stein)

|

||||||

The chat data is located in `~/.var/app/com.jeffser.Alpaca/data/chats` you can copy that directory wherever you want to.

|

🇳🇴 Norwegian | [CounterFlow64](https://github.com/CounterFlow64)

|

||||||

|

🇮🇳 Bengali | [Aritra Saha](https://github.com/olumolu)

|

||||||

### Force showing the welcome dialog

|

🇨🇳 Simplified Chinese | [Yuehao Sui](https://github.com/8ar10der) , [Aleksana](https://github.com/Aleksanaa)

|

||||||

To do that you just need to delete the file `~/.var/app/com.jeffser.Alpaca/config/server.json`, this won't affect your saved chats or models.

|

🇮🇳 Hindi | [Aritra Saha](https://github.com/olumolu)

|

||||||

|

|

||||||

### Add/Change environment variables for Ollama

|

|

||||||

You can change anything except `$HOME` and `$OLLAMA_HOST`, to do this go to `~/.var/app/com.jeffser.Alpaca/config/server.json` and change `ollama_overrides` accordingly, some overrides are available to change on the GUI.

|

|

||||||

|

|

||||||

---

|

---

|

||||||

|

|

||||||

## Thanks

|

## Thanks

|

||||||

- [not-a-dev-stein](https://github.com/not-a-dev-stein) for their help with requesting a new icon, bug reports and the translation to Brazilian Portuguese

|

|

||||||

- [TylerLaBree](https://github.com/TylerLaBree) for their requests and ideas

|

|

||||||

- [Alexkdeveloper](https://github.com/alexkdeveloper) for their help translating the app to Russian

|

|

||||||

- [Imbev](https://github.com/imbev) for their reports and suggestions

|

|

||||||

- [Nokse](https://github.com/Nokse22) for their contributions to the UI

|

|

||||||

- [Louis Chauvet-Villaret](https://github.com/loulou64490) for their suggestions and help translating the app to French

|

|

||||||

- [CounterFlow64](https://github.com/CounterFlow64) for their help translating the app to Norwegian

|

|

||||||

|

|

||||||

## About forks

|

- [not-a-dev-stein](https://github.com/not-a-dev-stein) for their help with requesting a new icon and bug reports

|

||||||

If you want to fork this... I mean, I think it would be better if you start from scratch, my code isn't well documented at all, but if you really want to, please give me some credit, that's all I ask for... And maybe a donation (joke)

|

- [TylerLaBree](https://github.com/TylerLaBree) for their requests and ideas

|

||||||

|

- [Imbev](https://github.com/imbev) for their reports and suggestions

|

||||||

|

- [Nokse](https://github.com/Nokse22) for their contributions to the UI and table rendering

|

||||||

|

- [Louis Chauvet-Villaret](https://github.com/loulou64490) for their suggestions

|

||||||

|

- [Aleksana](https://github.com/Aleksanaa) for her help with better handling of directories

|

||||||

|

- Sponsors for giving me enough money to be able to take a ride to my campus every time I need to <3

|

||||||

|

- Everyone that has shared kind words of encouragement!

|

||||||

|

|||||||
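The "Ollama session tips" removed from the README describe editing `~/.var/app/com.jeffser.Alpaca/config/server.json` by hand:

- `local_port` sets the port of the integrated Ollama instance (11435 by default).
- `ollama_overrides` holds environment variables for Ollama. Anything except `$HOME` and `$OLLAMA_HOST` can be changed.

Here is a minimal sketch of the same edits. It assumes `server.json` is a JSON object and that `ollama_overrides` maps variable names to values; the README does not show the exact shape. `update_server_config` is a hypothetical helper. Because these tips were dropped in this comparison, they may not apply to later versions.

```python
import json
from pathlib import Path

# Location given in the README for the Flatpak build.
SERVER_JSON = Path.home() / ".var/app/com.jeffser.Alpaca/config/server.json"
# Variables the README says cannot be overridden.
PROTECTED = {"HOME", "OLLAMA_HOST"}


def update_server_config(path=SERVER_JSON, local_port=None, overrides=None):
    """Hypothetical helper: set local_port and merge ollama_overrides in place."""
    path = Path(path)
    config = json.loads(path.read_text(encoding="utf-8"))
    if local_port is not None:
        config["local_port"] = int(local_port)
    for name, value in (overrides or {}).items():
        if name.lstrip("$") in PROTECTED:
            # Raised before anything is written, so the file stays unchanged.
            raise ValueError(f"{name} cannot be overridden")
        # Assumed shape: {"VARIABLE_NAME": "value", ...}
        config.setdefault("ollama_overrides", {})[name] = value
    path.write_text(json.dumps(config, indent=4), encoding="utf-8")
    return config
```

The same tips describe backing up chats as a plain copy of the `~/.var/app/com.jeffser.Alpaca/data/chats` directory. `shutil.copytree` would do that.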

    @@ -122,16 +122,16 @@
         "sources": [
             {
                 "type": "file",
    -            "url": "https://github.com/ollama/ollama/releases/download/v0.2.8/ollama-linux-amd64",
    -            "sha256": "7641b21e9d0822ba44e494f5ed3d3796d9e9fcdf4dbb66064f8c34c865bbec0b",
    +            "url": "https://github.com/ollama/ollama/releases/download/v0.3.3/ollama-linux-amd64",
    +            "sha256": "2b2a4ee4c86fa5b09503e95616bd1b3ee95238b1b3bf12488b9c27c66b84061a",
                 "only-arches": [
                     "x86_64"
                 ]
             },
             {
                 "type": "file",
    -            "url": "https://github.com/ollama/ollama/releases/download/v0.2.8/ollama-linux-arm64",
    -            "sha256": "8ccaea237c3ef2a34d0cc00d8a89ffb1179d5c49211b6cbdf80d8d88e3f0add6",
    +            "url": "https://github.com/ollama/ollama/releases/download/v0.3.3/ollama-linux-arm64",
    +            "sha256": "28fddbea0c161bc539fd08a3dc78d51413cfe8da97386cb39420f4f30667e22c",
                 "only-arches": [
                     "aarch64"
                 ]
    @@ -145,7 +145,7 @@
         "sources" : [
             {
                 "type" : "git",
    -            "url" : "file:///home/tentri/Documents/Alpaca",
    +            "url": "https://github.com/Jeffser/Alpaca.git",
                 "branch" : "main"
             }
         ]
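These two hunks appear to come from the Flatpak manifest; the build workflow points `manifest-path` at `com.jeffser.Alpaca.json`. They make two changes:

- They bump the bundled Ollama binaries from v0.2.8 to v0.3.3 with new `sha256` values, which flatpak-builder checks against each downloaded file.
- They replace a `file://` git URL, which resolves only on one developer's machine, with the public repository.

Here is a sketch of both checks, with hypothetical helper names.

```python
import hashlib


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large binaries are not read into memory at once."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def local_source_urls(manifest):
    """Collect source URLs using file://, which only resolve on the machine that wrote them."""
    found = []

    def walk(modules):
        for module in modules:
            # A module may also be a string naming a separate JSON file; skip those.
            if not isinstance(module, dict):
                continue
            for source in module.get("sources", []):
                if isinstance(source, dict) and source.get("url", "").startswith("file://"):
                    found.append(source["url"])
            walk(module.get("modules", []))  # nested modules

    walk(manifest.get("modules", []))
    return found
```

After downloading `ollama-linux-amd64` from the v0.3.3 release, `sha256_of(...)` should equal the new `sha256` value in the hunk above. A non-empty result from `local_source_urls` on the loaded manifest would flag the kind of local URL this change removes.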

    @@ -78,6 +78,140 @@
       <url type="contribute">https://github.com/Jeffser/Alpaca/discussions/154</url>
       <url type="vcs-browser">https://github.com/Jeffser/Alpaca</url>
       <releases>
    +    <release version="1.1.0" date="2024-08-10">
    +      <url type="details">https://github.com/Jeffser/Alpaca/releases/tag/1.1.0</url>
    +      <description>
    +        <p>New</p>
    +        <ul>
    +          <li>Model manager opens faster</li>
    +          <li>Delete chat option in secondary menu</li>
    +          <li>New model selector popup</li>
    +          <li>Standard shortcuts</li>
    +          <li>Model manager is navigable with keyboard</li>
    +          <li>Changed sidebar collapsing behavior</li>
    +          <li>Focus indicators on messages</li>
    +          <li>Welcome screen</li>
    +          <li>Give message entry focus at launch</li>
    +          <li>Generally better code</li>
    +        </ul>
    +        <p>Fixes</p>
    +        <ul>
    +          <li>Better width for dialogs</li>
    +          <li>Better compatibility with screen readers</li>
    +          <li>Fixed message regenerator</li>
    +          <li>Removed 'Featured models' from welcome dialog</li>
    +          <li>Added default buttons to dialogs</li>
    +          <li>Fixed import / export of chats</li>
    +          <li>Changed Python2 title to Python on code blocks</li>
    +          <li>Prevent regeneration of title when the user changed it to a custom title</li>
    +          <li>Show date on stopped messages</li>
    +          <li>Fix clear chat error</li>
    +        </ul>
    +      </description>
    +    </release>
    +    <release version="1.0.6" date="2024-08-04">
    +      <url type="details">https://github.com/Jeffser/Alpaca/releases/tag/1.0.6</url>
    +      <description>
    +        <p>New</p>
    +        <ul>
    +          <li>Changed shortcuts to standards</li>
    +          <li>Moved 'Manage Models' button to primary menu</li>
    +          <li>Stable support for GGUF model files</li>
    +          <li>General optimizations</li>
    +        </ul>
    +        <p>Fixes</p>
    +        <ul>
    +          <li>Better handling of enter key (important for Japanese input)</li>
    +          <li>Removed sponsor dialog</li>
    +          <li>Added sponsor link in about dialog</li>
    +          <li>Changed window and elements dimensions</li>
    +          <li>Selected model changes when entering model manager</li>
    +          <li>Better image tooltips</li>
    +          <li>GGUF Support</li>
    +        </ul>
    +      </description>
    +    </release>
    +    <release version="1.0.5" date="2024-08-02">
    +      <url type="details">https://github.com/Jeffser/Alpaca/releases/tag/1.0.5</url>
    +      <description>
    +        <p>New</p>
    +        <ul>
    +          <li>Regenerate any response, even if they are incomplete</li>
    +          <li>Support for pulling models by name:tag</li>
    +          <li>Stable support for GGUF model files</li>
    +          <li>Restored sidebar toggle button</li>
    +        </ul>
    +        <p>Fixes</p>
    +        <ul>
    +          <li>Reverted back to standard styles</li>
    +          <li>Fixed generated titles having "'S" for some reason</li>
    +          <li>Changed min width for model dropdown</li>
    +          <li>Changed message entry shadow</li>
    +          <li>The last model used is now restored when the user changes chat</li>
    +          <li>Better check for message finishing</li>
    +        </ul>
    +      </description>
    +    </release>
    +    <release version="1.0.4" date="2024-08-01">
    +      <url type="details">https://github.com/Jeffser/Alpaca/releases/tag/1.0.4</url>
    +      <description>
    +        <p>New</p>
    +        <ul>
    +          <li>Added table rendering (Thanks Nokse)</li>
    +        </ul>
    +        <p>Fixes</p>
    +        <ul>
    +          <li>Made support dialog more common</li>
    +          <li>Dialog title on tag chooser when downloading models didn't display properly</li>
    +          <li>Prevent chat generation from generating a title with multiple lines</li>
    +        </ul>
    +      </description>
    +    </release>
    +    <release version="1.0.3" date="2024-08-01">
    +      <url type="details">https://github.com/Jeffser/Alpaca/releases/tag/1.0.3</url>
    +      <description>
    +        <p>New</p>
    +        <ul>
    +          <li>Bearer Token entry on connection error dialog</li>
    +          <li>Small appearance changes</li>
    +          <li>Compatibility with code blocks without explicit language</li>
    +          <li>Rare, optional and dismissible support dialog</li>
    +        </ul>
    +        <p>Fixes</p>
    +        <ul>
    +          <li>Date format for Simplified Chinese translation</li>
    +          <li>Bug with unsupported localizations</li>
    +          <li>Min height being too large to be used on mobile</li>
    +          <li>Remote connection checker bug</li>
    +        </ul>
    +      </description>
    +    </release>
    +    <release version="1.0.2" date="2024-07-29">
    +      <url type="details">https://github.com/Jeffser/Alpaca/releases/tag/1.0.2</url>
    +      <description>
    +        <p>Fixes</p>
    +        <ul>
    +          <li>Models with capital letters on their tag don't work</li>
    +          <li>Ollama fails to launch on some systems</li>
    +          <li>YouTube transcripts are not being saved in the right TMP directory</li>
    +        </ul>
    +        <p>New</p>
    +        <ul>
    +          <li>Debug messages are now shown on the 'About Alpaca' dialog</li>
    +          <li>Updated Ollama to v0.3.0 (new models)</li>
    +        </ul>
    +      </description>
    +    </release>
    +    <release version="1.0.1" date="2024-07-23">
    +      <url type="details">https://github.com/Jeffser/Alpaca/releases/tag/1.0.1</url>
    +      <description>
    +        <p>Fixes</p>
    +        <ul>
    +          <li>Models with '-' in their names didn't work properly, this is now fixed</li>
    +          <li>Better connection check for Ollama</li>
    +        </ul>
    +      </description>
    +    </release>
         <release version="1.0.0" date="2024-07-22">
           <url type="details">https://github.com/Jeffser/Alpaca/releases/tag/1.0.0</url>
           <description>
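The new `<release>` entries are added newest first, above the existing 1.0.0 entry, and AppStream expects releases in that order. Here is a minimal standard-library sketch of an ordering check. It assumes plain dotted numeric versions such as `1.0.6` and ISO `YYYY-MM-DD` dates. The helper names are hypothetical.

```python
import xml.etree.ElementTree as ET


def version_key(version):
    """Turn '1.0.10' into (1, 0, 10) so versions compare numerically, not as text."""
    return tuple(int(part) for part in version.split("."))


def check_releases(metainfo_xml):
    """Return (version, date) pairs, raising ValueError if they are not newest first."""
    root = ET.fromstring(metainfo_xml)
    releases = [(rel.get("version"), rel.get("date")) for rel in root.iter("release")]
    versions = [version_key(version) for version, date in releases]
    # ISO 8601 dates (YYYY-MM-DD) sort correctly as plain strings.
    dates = [date for version, date in releases]
    if versions != sorted(versions, reverse=True):
        raise ValueError("releases are not in descending version order")
    if dates != sorted(dates, reverse=True):
        raise ValueError("release dates are not in descending order")
    return releases
```

Releases published on the same day, such as 1.0.4 and 1.0.3 (both dated 2024-08-01), pass the date check because equal dates count as ordered.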

    @@ -1,5 +1,5 @@
     project('Alpaca', 'c',
    -          version: '1.0.0',
    +          version: '1.1.0',
         meson_version: '>= 0.62.0',
       default_options: [ 'warning_level=2', 'werror=false', ],
     )
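This `meson.build` hunk moves the project version to 1.1.0, the same number as the newest release in the metainfo changes. A release script could catch a forgotten bump by reading the version back out of `meson.build` and comparing it with the newest `<release>`. The regular expression below is a rough sketch, not a Meson parser. It only handles a single-quoted `version:` keyword inside `project()`, and the function name is hypothetical.

```python
import re

# \b keeps 'meson_version:' from matching, since '_' is a word character.
VERSION_RE = re.compile(r"project\s*\(.*?\bversion\s*:\s*'([^']*)'", re.DOTALL)


def meson_project_version(meson_build):
    """Return the version: keyword of the project() call, or raise ValueError."""
    match = VERSION_RE.search(meson_build)
    if match is None:
        raise ValueError("no version: keyword found in project()")
    return match.group(1)


MESON_BUILD = """project('Alpaca', 'c',
          version: '1.1.0',
    meson_version: '>= 0.62.0',
  default_options: [ 'warning_level=2', 'werror=false', ],
)"""

print(meson_project_version(MESON_BUILD))  # 1.1.0
```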

    @@ -4,4 +4,5 @@ pt_BR
     fr
     nb_NO
     bn
     zh_CN
    +hi
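This hunk enables Hindi (`hi`). It has the shape of a gettext `po/LINGUAS` list, although the file name is not shown in this comparison. Each code listed there is expected to have a matching `po/<code>.po` catalog. Here is a minimal sketch of checking that, assuming the usual LINGUAS syntax of whitespace-separated codes with `#` comments. The helper names are hypothetical.

```python
from pathlib import Path


def read_linguas(path):
    """Return the language codes in a LINGUAS file, ignoring '#' comments."""
    codes = []
    for line in Path(path).read_text(encoding="utf-8").splitlines():
        # Several codes may share a line; everything after '#' is a comment.
        codes.extend(line.split("#", 1)[0].split())
    return codes


def missing_catalogs(po_dir):
    """List LINGUAS entries that have no <code>.po file in the same directory."""
    po_dir = Path(po_dir)
    return [code for code in read_linguas(po_dir / "LINGUAS")
            if not (po_dir / f"{code}.po").is_file()]
```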

`po/alpaca.pot`: 1724 lines changed. File diff suppressed because it is too large.

`po/nb_NO.po`: 1858 lines changed. File diff suppressed because it is too large.

`po/pt_BR.po`: 1761 lines changed. File diff suppressed because it is too large.

`po/zh_CN.po`: 1824 lines changed. File diff suppressed because it is too large.

    @@ -28,6 +28,8 @@
         <file alias="icons/scalable/status/edit-find-symbolic.svg">icons/edit-find-symbolic.svg</file>
         <file alias="icons/scalable/status/edit-symbolic.svg">icons/edit-symbolic.svg</file>
         <file alias="icons/scalable/status/image-missing-symbolic.svg">icons/image-missing-symbolic.svg</file>
    +    <file alias="icons/scalable/status/update-symbolic.svg">icons/update-symbolic.svg</file>
    +    <file alias="icons/scalable/status/down-symbolic.svg">icons/down-symbolic.svg</file>
         <file preprocess="xml-stripblanks">window.ui</file>
         <file preprocess="xml-stripblanks">gtk/help-overlay.ui</file>
       </gresource>

File diff suppressed because it is too large


    @@ -1,16 +1,18 @@
     descriptions = {
    +    'llama3.1': _("Llama 3.1 is a new state-of-the-art model from Meta available in 8B, 70B and 405B parameter sizes."),
         'gemma2': _("Google Gemma 2 is now available in 2 sizes, 9B and 27B."),
    -    'llama3': _("Meta Llama 3: The most capable openly available LLM to date"),
    +    'mistral-nemo': _("A state-of-the-art 12B model with 128k context length, built by Mistral AI in collaboration with NVIDIA."),
    +    'mistral-large': _("Mistral Large 2 is Mistral's new flagship model that is significantly more capable in code generation, mathematics, and reasoning with 128k context window and support for dozens of languages."),
         'qwen2': _("Qwen2 is a new series of large language models from Alibaba group"),
         'deepseek-coder-v2': _("An open-source Mixture-of-Experts code language model that achieves performance comparable to GPT4-Turbo in code-specific tasks."),
         'phi3': _("Phi-3 is a family of lightweight 3B (Mini) and 14B (Medium) state-of-the-art open models by Microsoft."),
    -    'aya': _("Aya 23, released by Cohere, is a new family of state-of-the-art, multilingual models that support 23 languages."),
         'mistral': _("The 7B model released by Mistral AI, updated to version 0.3."),
         'mixtral': _("A set of Mixture of Experts (MoE) model with open weights by Mistral AI in 8x7b and 8x22b parameter sizes."),
         'codegemma': _("CodeGemma is a collection of powerful, lightweight models that can perform a variety of coding tasks like fill-in-the-middle code completion, code generation, natural language understanding, mathematical reasoning, and instruction following."),
         'command-r': _("Command R is a Large Language Model optimized for conversational interaction and long context tasks."),
         'command-r-plus': _("Command R+ is a powerful, scalable large language model purpose-built to excel at real-world enterprise use cases."),
         'llava': _("🌋 LLaVA is a novel end-to-end trained large multimodal model that combines a vision encoder and Vicuna for general-purpose visual and language understanding. Updated to version 1.6."),
    +    'llama3': _("Meta Llama 3: The most capable openly available LLM to date"),
         'gemma': _("Gemma is a family of lightweight, state-of-the-art open models built by Google DeepMind. Updated to version 1.1"),
         'qwen': _("Qwen 1.5 is a series of large language models by Alibaba Cloud spanning from 0.5B to 110B parameters"),
         'llama2': _("Llama 2 is a collection of foundation language models ranging from 7B to 70B parameters."),
    @@ -18,49 +20,50 @@ descriptions = {
         'dolphin-mixtral': _("Uncensored, 8x7b and 8x22b fine-tuned models based on the Mixtral mixture of experts models that excels at coding tasks. Created by Eric Hartford."),
         'nomic-embed-text': _("A high-performing open embedding model with a large token context window."),
         'llama2-uncensored': _("Uncensored Llama 2 model by George Sung and Jarrad Hope."),
    -    'deepseek-coder': _("DeepSeek Coder is a capable coding model trained on two trillion code and natural language tokens."),
         'phi': _("Phi-2: a 2.7B language model by Microsoft Research that demonstrates outstanding reasoning and language understanding capabilities."),
    +    'deepseek-coder': _("DeepSeek Coder is a capable coding model trained on two trillion code and natural language tokens."),
         'dolphin-mistral': _("The uncensored Dolphin model based on Mistral that excels at coding tasks. Updated to version 2.8."),
         'orca-mini': _("A general-purpose model ranging from 3 billion parameters to 70 billion, suitable for entry-level hardware."),
         'dolphin-llama3': _("Dolphin 2.9 is a new model with 8B and 70B sizes by Eric Hartford based on Llama 3 that has a variety of instruction, conversational, and coding skills."),
         'mxbai-embed-large': _("State-of-the-art large embedding model from mixedbread.ai"),

|

||||||

'mistral-openorca': _("Mistral OpenOrca is a 7 billion parameter model, fine-tuned on top of the Mistral 7B model using the OpenOrca dataset."),

|

|

||||||

'starcoder2': _("StarCoder2 is the next generation of transparently trained open code LLMs that comes in three sizes: 3B, 7B and 15B parameters."),

|

'starcoder2': _("StarCoder2 is the next generation of transparently trained open code LLMs that comes in three sizes: 3B, 7B and 15B parameters."),

|

||||||

'zephyr': _("Zephyr is a series of fine-tuned versions of the Mistral and Mixtral models that are trained to act as helpful assistants."),

|

'mistral-openorca': _("Mistral OpenOrca is a 7 billion parameter model, fine-tuned on top of the Mistral 7B model using the OpenOrca dataset."),

|

||||||

'yi': _("Yi 1.5 is a high-performing, bilingual language model."),

|

'yi': _("Yi 1.5 is a high-performing, bilingual language model."),

|

||||||

|

'zephyr': _("Zephyr is a series of fine-tuned versions of the Mistral and Mixtral models that are trained to act as helpful assistants."),

|

||||||

'llama2-chinese': _("Llama 2 based model fine tuned to improve Chinese dialogue ability."),

|

'llama2-chinese': _("Llama 2 based model fine tuned to improve Chinese dialogue ability."),

|

||||||

'llava-llama3': _("A LLaVA model fine-tuned from Llama 3 Instruct with better scores in several benchmarks."),

|

'llava-llama3': _("A LLaVA model fine-tuned from Llama 3 Instruct with better scores in several benchmarks."),

|

||||||

'vicuna': _("General use chat model based on Llama and Llama 2 with 2K to 16K context sizes."),

|

'vicuna': _("General use chat model based on Llama and Llama 2 with 2K to 16K context sizes."),

|

||||||

'nous-hermes2': _("The powerful family of models by Nous Research that excels at scientific discussion and coding tasks."),

|

'nous-hermes2': _("The powerful family of models by Nous Research that excels at scientific discussion and coding tasks."),

|

||||||

'wizard-vicuna-uncensored': _("Wizard Vicuna Uncensored is a 7B, 13B, and 30B parameter model based on Llama 2 uncensored by Eric Hartford."),

|

|

||||||

'tinyllama': _("The TinyLlama project is an open endeavor to train a compact 1.1B Llama model on 3 trillion tokens."),

|

'tinyllama': _("The TinyLlama project is an open endeavor to train a compact 1.1B Llama model on 3 trillion tokens."),

|

||||||

|

'wizard-vicuna-uncensored': _("Wizard Vicuna Uncensored is a 7B, 13B, and 30B parameter model based on Llama 2 uncensored by Eric Hartford."),

|

||||||

'codestral': _("Codestral is Mistral AI’s first-ever code model designed for code generation tasks."),

|

'codestral': _("Codestral is Mistral AI’s first-ever code model designed for code generation tasks."),

|

||||||

'starcoder': _("StarCoder is a code generation model trained on 80+ programming languages."),

|

'starcoder': _("StarCoder is a code generation model trained on 80+ programming languages."),

|

||||||

'wizardlm2': _("State of the art large language model from Microsoft AI with improved performance on complex chat, multilingual, reasoning and agent use cases."),

|

'wizardlm2': _("State of the art large language model from Microsoft AI with improved performance on complex chat, multilingual, reasoning and agent use cases."),

|

||||||

'openchat': _("A family of open-source models trained on a wide variety of data, surpassing ChatGPT on various benchmarks. Updated to version 3.5-0106."),

|

'openchat': _("A family of open-source models trained on a wide variety of data, surpassing ChatGPT on various benchmarks. Updated to version 3.5-0106."),

|

||||||

|

'aya': _("Aya 23, released by Cohere, is a new family of state-of-the-art, multilingual models that support 23 languages."),

|

||||||

'tinydolphin': _("An experimental 1.1B parameter model trained on the new Dolphin 2.8 dataset by Eric Hartford and based on TinyLlama."),

|

'tinydolphin': _("An experimental 1.1B parameter model trained on the new Dolphin 2.8 dataset by Eric Hartford and based on TinyLlama."),

|

||||||

'openhermes': _("OpenHermes 2.5 is a 7B model fine-tuned by Teknium on Mistral with fully open datasets."),

|

'openhermes': _("OpenHermes 2.5 is a 7B model fine-tuned by Teknium on Mistral with fully open datasets."),

|

||||||

'wizardcoder': _("State-of-the-art code generation model"),

|

'wizardcoder': _("State-of-the-art code generation model"),

|

||||||

'stable-code': _("Stable Code 3B is a coding model with instruct and code completion variants on par with models such as Code Llama 7B that are 2.5x larger."),

|

'stable-code': _("Stable Code 3B is a coding model with instruct and code completion variants on par with models such as Code Llama 7B that are 2.5x larger."),

|

||||||

'codeqwen': _("CodeQwen1.5 is a large language model pretrained on a large amount of code data."),

|

'codeqwen': _("CodeQwen1.5 is a large language model pretrained on a large amount of code data."),

|

||||||

'neural-chat': _("A fine-tuned model based on Mistral with good coverage of domain and language."),

|

|

||||||

'wizard-math': _("Model focused on math and logic problems"),

|

'wizard-math': _("Model focused on math and logic problems"),

|

||||||

|

'neural-chat': _("A fine-tuned model based on Mistral with good coverage of domain and language."),

|

||||||

'stablelm2': _("Stable LM 2 is a state-of-the-art 1.6B and 12B parameter language model trained on multilingual data in English, Spanish, German, Italian, French, Portuguese, and Dutch."),

|

'stablelm2': _("Stable LM 2 is a state-of-the-art 1.6B and 12B parameter language model trained on multilingual data in English, Spanish, German, Italian, French, Portuguese, and Dutch."),

|

||||||

'all-minilm': _("Embedding models on very large sentence level datasets."),

|

|

||||||

'granite-code': _("A family of open foundation models by IBM for Code Intelligence"),

|

'granite-code': _("A family of open foundation models by IBM for Code Intelligence"),

|

||||||

|

'all-minilm': _("Embedding models on very large sentence level datasets."),

|

||||||

'phind-codellama': _("Code generation model based on Code Llama."),

|

'phind-codellama': _("Code generation model based on Code Llama."),

|

||||||

'dolphincoder': _("A 7B and 15B uncensored variant of the Dolphin model family that excels at coding, based on StarCoder2."),

|

'dolphincoder': _("A 7B and 15B uncensored variant of the Dolphin model family that excels at coding, based on StarCoder2."),

|

||||||

'nous-hermes': _("General use models based on Llama and Llama 2 from Nous Research."),

|

'nous-hermes': _("General use models based on Llama and Llama 2 from Nous Research."),

|

||||||

'sqlcoder': _("SQLCoder is a code completion model fined-tuned on StarCoder for SQL generation tasks"),

|

'sqlcoder': _("SQLCoder is a code completion model fined-tuned on StarCoder for SQL generation tasks"),

|

||||||

'llama3-gradient': _("This model extends LLama-3 8B's context length from 8k to over 1m tokens."),

|

'llama3-gradient': _("This model extends LLama-3 8B's context length from 8k to over 1m tokens."),

|

||||||

'starling-lm': _("Starling is a large language model trained by reinforcement learning from AI feedback focused on improving chatbot helpfulness."),

|

'starling-lm': _("Starling is a large language model trained by reinforcement learning from AI feedback focused on improving chatbot helpfulness."),

|

||||||

'deepseek-llm': _("An advanced language model crafted with 2 trillion bilingual tokens."),

|

|

||||||

'yarn-llama2': _("An extension of Llama 2 that supports a context of up to 128k tokens."),

|

'yarn-llama2': _("An extension of Llama 2 that supports a context of up to 128k tokens."),

|

||||||

'xwinlm': _("Conversational model based on Llama 2 that performs competitively on various benchmarks."),

|

'xwinlm': _("Conversational model based on Llama 2 that performs competitively on various benchmarks."),

|

||||||

|

'deepseek-llm': _("An advanced language model crafted with 2 trillion bilingual tokens."),

|

||||||

'llama3-chatqa': _("A model from NVIDIA based on Llama 3 that excels at conversational question answering (QA) and retrieval-augmented generation (RAG)."),

|

'llama3-chatqa': _("A model from NVIDIA based on Llama 3 that excels at conversational question answering (QA) and retrieval-augmented generation (RAG)."),

|

||||||

'orca2': _("Orca 2 is built by Microsoft research, and are a fine-tuned version of Meta's Llama 2 models. The model is designed to excel particularly in reasoning."),

|

'orca2': _("Orca 2 is built by Microsoft research, and are a fine-tuned version of Meta's Llama 2 models. The model is designed to excel particularly in reasoning."),

|

||||||

'solar': _("A compact, yet powerful 10.7B large language model designed for single-turn conversation."),

|

|

||||||

'wizardlm': _("General use model based on Llama 2."),

|

'wizardlm': _("General use model based on Llama 2."),

|

||||||

|

'solar': _("A compact, yet powerful 10.7B large language model designed for single-turn conversation."),

|

||||||

'samantha-mistral': _("A companion assistant trained in philosophy, psychology, and personal relationships. Based on Mistral."),

|

'samantha-mistral': _("A companion assistant trained in philosophy, psychology, and personal relationships. Based on Mistral."),

|

||||||

'dolphin-phi': _("2.7B uncensored Dolphin model by Eric Hartford, based on the Phi language model by Microsoft Research."),

|

'dolphin-phi': _("2.7B uncensored Dolphin model by Eric Hartford, based on the Phi language model by Microsoft Research."),

|

||||||

'stable-beluga': _("Llama 2 based model fine tuned on an Orca-style dataset. Originally called Free Willy."),

|

'stable-beluga': _("Llama 2 based model fine tuned on an Orca-style dataset. Originally called Free Willy."),

|

||||||

@@ -68,22 +71,23 @@ descriptions = {

|

|||||||

'bakllava': _("BakLLaVA is a multimodal model consisting of the Mistral 7B base model augmented with the LLaVA architecture."),

|

'bakllava': _("BakLLaVA is a multimodal model consisting of the Mistral 7B base model augmented with the LLaVA architecture."),

|

||||||

'wizardlm-uncensored': _("Uncensored version of Wizard LM model"),

|

'wizardlm-uncensored': _("Uncensored version of Wizard LM model"),

|

||||||

'snowflake-arctic-embed': _("A suite of text embedding models by Snowflake, optimized for performance."),

|

'snowflake-arctic-embed': _("A suite of text embedding models by Snowflake, optimized for performance."),

|

||||||

|

'deepseek-v2': _("A strong, economical, and efficient Mixture-of-Experts language model."),

|

||||||

'medllama2': _("Fine-tuned Llama 2 model to answer medical questions based on an open source medical dataset."),

|

'medllama2': _("Fine-tuned Llama 2 model to answer medical questions based on an open source medical dataset."),

|

||||||

'yarn-mistral': _("An extension of Mistral to support context windows of 64K or 128K."),

|

'yarn-mistral': _("An extension of Mistral to support context windows of 64K or 128K."),

|

||||||

'nous-hermes2-mixtral': _("The Nous Hermes 2 model from Nous Research, now trained over Mixtral."),

|

|

||||||

'llama-pro': _("An expansion of Llama 2 that specializes in integrating both general language understanding and domain-specific knowledge, particularly in programming and mathematics."),

|

'llama-pro': _("An expansion of Llama 2 that specializes in integrating both general language understanding and domain-specific knowledge, particularly in programming and mathematics."),

|

||||||

'deepseek-v2': _("A strong, economical, and efficient Mixture-of-Experts language model."),

|

'nous-hermes2-mixtral': _("The Nous Hermes 2 model from Nous Research, now trained over Mixtral."),

|

||||||

'meditron': _("Open-source medical large language model adapted from Llama 2 to the medical domain."),

|

'meditron': _("Open-source medical large language model adapted from Llama 2 to the medical domain."),

|

||||||

'codeup': _("Great code generation model based on Llama2."),

|

'codeup': _("Great code generation model based on Llama2."),

|

||||||

'nexusraven': _("Nexus Raven is a 13B instruction tuned model for function calling tasks."),

|

'nexusraven': _("Nexus Raven is a 13B instruction tuned model for function calling tasks."),

|

||||||

'everythinglm': _("Uncensored Llama2 based model with support for a 16K context window."),

|

'everythinglm': _("Uncensored Llama2 based model with support for a 16K context window."),

|

||||||

'llava-phi3': _("A new small LLaVA model fine-tuned from Phi 3 Mini."),

|

'llava-phi3': _("A new small LLaVA model fine-tuned from Phi 3 Mini."),

|

||||||

|

'codegeex4': _("A versatile model for AI software development scenarios, including code completion."),

|

||||||

|

'glm4': _("A strong multi-lingual general language model with competitive performance to Llama 3."),

|

||||||

'magicoder': _("🎩 Magicoder is a family of 7B parameter models trained on 75K synthetic instruction data using OSS-Instruct, a novel approach to enlightening LLMs with open-source code snippets."),

|

'magicoder': _("🎩 Magicoder is a family of 7B parameter models trained on 75K synthetic instruction data using OSS-Instruct, a novel approach to enlightening LLMs with open-source code snippets."),

|

||||||

'stablelm-zephyr': _("A lightweight chat model allowing accurate, and responsive output without requiring high-end hardware."),

|

'stablelm-zephyr': _("A lightweight chat model allowing accurate, and responsive output without requiring high-end hardware."),

|

||||||

'codebooga': _("A high-performing code instruct model created by merging two existing code models."),

|

'codebooga': _("A high-performing code instruct model created by merging two existing code models."),

|

||||||

'mistrallite': _("MistralLite is a fine-tuned model based on Mistral with enhanced capabilities of processing long contexts."),

|

'mistrallite': _("MistralLite is a fine-tuned model based on Mistral with enhanced capabilities of processing long contexts."),

|

||||||

'glm4': _("A strong multi-lingual general language model with competitive performance to Llama 3."),

|

'wizard-vicuna': _("Wizard Vicuna is a 13B parameter model based on Llama 2 trained by MelodysDreamj."),

|

||||||

'wizard-vicuna': _("Wizard Vicuna is a 13B parameter model based on Llama 2 trained by MelodysDreamj."),

|

|

||||||

'duckdb-nsql': _("7B parameter text-to-SQL model made by MotherDuck and Numbers Station."),

|

'duckdb-nsql': _("7B parameter text-to-SQL model made by MotherDuck and Numbers Station."),

|

||||||

'megadolphin': _("MegaDolphin-2.2-120b is a transformation of Dolphin-2.2-70b created by interleaving the model with itself."),

|

'megadolphin': _("MegaDolphin-2.2-120b is a transformation of Dolphin-2.2-70b created by interleaving the model with itself."),

|

||||||

'goliath': _("A language model created by combining two fine-tuned Llama 2 70B models into one."),

|

'goliath': _("A language model created by combining two fine-tuned Llama 2 70B models into one."),

|

||||||

@@ -92,12 +96,10 @@ descriptions = {

|

|||||||

'falcon2': _("Falcon2 is an 11B parameters causal decoder-only model built by TII and trained over 5T tokens."),

|

'falcon2': _("Falcon2 is an 11B parameters causal decoder-only model built by TII and trained over 5T tokens."),

|

||||||

'notus': _("A 7B chat model fine-tuned with high-quality data and based on Zephyr."),

|

'notus': _("A 7B chat model fine-tuned with high-quality data and based on Zephyr."),

|

||||||

'dbrx': _("DBRX is an open, general-purpose LLM created by Databricks."),

|

'dbrx': _("DBRX is an open, general-purpose LLM created by Databricks."),

|

||||||

'codegeex4': _("A versatile model for AI software development scenarios, including code completion."),

|

|

||||||

'alfred': _("A robust conversational model designed to be used for both chat and instruct use cases."),

|

|

||||||

'internlm2': _("InternLM2.5 is a 7B parameter model tailored for practical scenarios with outstanding reasoning capability."),

|

'internlm2': _("InternLM2.5 is a 7B parameter model tailored for practical scenarios with outstanding reasoning capability."),

|

||||||

|

'alfred': _("A robust conversational model designed to be used for both chat and instruct use cases."),

|

||||||

'llama3-groq-tool-use': _("A series of models from Groq that represent a significant advancement in open-source AI capabilities for tool use/function calling."),

|

'llama3-groq-tool-use': _("A series of models from Groq that represent a significant advancement in open-source AI capabilities for tool use/function calling."),

|

||||||

'mathstral': _("MathΣtral: a 7B model designed for math reasoning and scientific discovery by Mistral AI."),

|

'mathstral': _("MathΣtral: a 7B model designed for math reasoning and scientific discovery by Mistral AI."),

|

||||||

'mistral-nemo': _("A state-of-the-art 12B model with 128k context length, built by Mistral AI in collaboration with NVIDIA."),

|

|

||||||

'firefunction-v2': _("An open weights function calling model based on Llama 3, competitive with GPT-4o function calling capabilities."),

|

'firefunction-v2': _("An open weights function calling model based on Llama 3, competitive with GPT-4o function calling capabilities."),

|

||||||

'nuextract': _("A 3.8B model fine-tuned on a private high-quality synthetic dataset for information extraction, based on Phi-3."),

|

'nuextract': _("A 3.8B model fine-tuned on a private high-quality synthetic dataset for information extraction, based on Phi-3."),

|

||||||

}

|

}

|

||||||
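The `descriptions` table above maps Ollama model names to translatable one-line summaries. Below is a minimal sketch of how a table like this could be queried. It assumes that model names may carry a `:tag` suffix (as in `llama3:8b`) and that `_` is the standard `gettext.gettext`. `describe_model` is a hypothetical helper that is not part of the diff, and only two entries are copied over.

```python
import gettext

# Assumption: `_` is the standard gettext lookup. With no catalog
# installed it returns the message unchanged.
_ = gettext.gettext

# Excerpt of the `descriptions` mapping from the diff (two entries only).
descriptions = {
    'llama3': _("Meta Llama 3: The most capable openly available LLM to date"),
    'phi': _("Phi-2: a 2.7B language model by Microsoft Research that demonstrates outstanding reasoning and language understanding capabilities."),
}


def describe_model(model_name: str, default: str = "") -> str:
    """Return the description for a name such as 'llama3:8b'.

    Hypothetical helper: drops an optional ':tag' suffix before the
    lookup and falls back to `default` for models not in the table.
    """
    base_name = model_name.split(":", 1)[0]
    return descriptions.get(base_name, default)


print(describe_model("llama3:8b"))
print(describe_model("unknown-model", default="No description"))
```

Because `_()` runs when the dictionary literal is evaluated, the translation catalog must be installed before the module is imported. Otherwise the descriptions will not appear translated.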

@@ -1,15 +1,19 @@

|

|||||||

# connection_handler.py

|

# connection_handler.py

|

||||||

import json, requests

|

"""

|

||||||

|

Handles requests to remote and integrated instances of Ollama

|

||||||

|

"""

|

||||||

|

import json

|

||||||

|

import requests

|

||||||

#OK=200 response.status_code

|

#OK=200 response.status_code

|

||||||

url = None

|

URL = None

|

||||||

bearer_token = None

|

BEARER_TOKEN = None

|

||||||

|

|

||||||

def get_headers(include_json:bool) -> dict:

|

def get_headers(include_json:bool) -> dict:

|

||||||

headers = {}

|

headers = {}

|

||||||

if include_json:

|

if include_json:

|

||||||

headers["Content-Type"] = "application/json"

|

headers["Content-Type"] = "application/json"

|

||||||

if bearer_token:

|

if BEARER_TOKEN:

|

||||||

headers["Authorization"] = "Bearer {}".format(bearer_token)

|

headers["Authorization"] = "Bearer {}".format(BEARER_TOKEN)

|

||||||

return headers if len(headers.keys()) > 0 else None

|

return headers if len(headers.keys()) > 0 else None

|

||||||

|

|

||||||

def simple_get(connection_url:str) -> dict:

|

def simple_get(connection_url:str) -> dict:

|

||||||

|

|||||||

124

src/dialogs.py


@@ -1,11 +1,15 @@

|

|||||||

# dialogs.py

|

# dialogs.py

|

||||||

|

"""

|

||||||

from gi.repository import Adw, Gtk, Gdk, GLib, GtkSource, Gio, GdkPixbuf

|

Handles UI dialogs

|

||||||

|

"""

|

||||||

import os

|

import os

|

||||||

|

import logging

|

||||||

from pytube import YouTube

|

from pytube import YouTube

|

||||||

from html2text import html2text

|

from html2text import html2text

|

||||||

|

from gi.repository import Adw, Gtk

|

||||||

from . import connection_handler

|

from . import connection_handler

|

||||||

|

|

||||||

|

logger = logging.getLogger(__name__)

|

||||||

# CLEAR CHAT | WORKS

|

# CLEAR CHAT | WORKS

|

||||||

|

|

||||||

def clear_chat_response(self, dialog, task):

|

def clear_chat_response(self, dialog, task):

|

||||||

@@ -24,6 +28,7 @@ def clear_chat(self):

|

|||||||

dialog.add_response("cancel", _("Cancel"))

|

dialog.add_response("cancel", _("Cancel"))

|

||||||

dialog.add_response("clear", _("Clear"))

|

dialog.add_response("clear", _("Clear"))

|

||||||

dialog.set_response_appearance("clear", Adw.ResponseAppearance.DESTRUCTIVE)

|

dialog.set_response_appearance("clear", Adw.ResponseAppearance.DESTRUCTIVE)

|

||||||

|

dialog.set_default_response("clear")

|

||||||

dialog.choose(

|

dialog.choose(

|

||||||

parent = self,

|

parent = self,

|

||||||

cancellable = None,

|

cancellable = None,

|

||||||

@@ -45,6 +50,7 @@ def delete_chat(self, chat_name):

|

|||||||

dialog.add_response("cancel", _("Cancel"))

|

dialog.add_response("cancel", _("Cancel"))

|

||||||

dialog.add_response("delete", _("Delete"))

|

dialog.add_response("delete", _("Delete"))

|

||||||

dialog.set_response_appearance("delete", Adw.ResponseAppearance.DESTRUCTIVE)

|

dialog.set_response_appearance("delete", Adw.ResponseAppearance.DESTRUCTIVE)

|

||||||

|

dialog.set_default_response("delete")

|

||||||

dialog.choose(

|

dialog.choose(

|

||||||

parent = self,

|

parent = self,

|

||||||

cancellable = None,

|

cancellable = None,

|

||||||

@@ -54,9 +60,11 @@ def delete_chat(self, chat_name):

|

|||||||

# RENAME CHAT | WORKS

|

# RENAME CHAT | WORKS

|

||||||

|

|

||||||

def rename_chat_response(self, dialog, task, old_chat_name, entry, label_element):

|

def rename_chat_response(self, dialog, task, old_chat_name, entry, label_element):

|

||||||

if not entry: return

|

if not entry:

|

||||||

|

return

|

||||||

new_chat_name = entry.get_text()

|

new_chat_name = entry.get_text()

|

||||||

if old_chat_name == new_chat_name: return

|

if old_chat_name == new_chat_name:

|

||||||

|

return

|

||||||

if new_chat_name and (task is None or dialog.choose_finish(task) == "rename"):

|

if new_chat_name and (task is None or dialog.choose_finish(task) == "rename"):

|

||||||

self.rename_chat(old_chat_name, new_chat_name, label_element)

|

self.rename_chat(old_chat_name, new_chat_name, label_element)

|

||||||

|

|

||||||
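The new `rename_chat_response` places each of its guard clauses on its own line. Below is the same decision logic, separated from GTK and written as a pure function for illustration. `resolve_rename` is hypothetical. Its `response` argument stands in for `dialog.choose_finish(task)`, and it is `None` when the rename was triggered by pressing Enter (the `task is None` case).

```python
from typing import Optional


def resolve_rename(old_chat_name: str,
                   new_chat_name: Optional[str],
                   response: Optional[str]) -> Optional[str]:
    """Return the chat name to rename to, or None when nothing should change.

    Hypothetical helper mirroring the guards in rename_chat_response:
    - new_chat_name is None -> there was no entry to read from
    - response is None      -> Enter was pressed in the entry (no task)
    - otherwise             -> id of the dialog button that was chosen
    """
    if new_chat_name is None:
        return None
    if old_chat_name == new_chat_name:
        # Unchanged name: nothing to do.
        return None
    if new_chat_name and (response is None or response == "rename"):
        return new_chat_name
    # Empty name or a non-"rename" response such as "cancel".
    return None


print(resolve_rename("Chat 1", "Notes", "rename"))  # Notes
print(resolve_rename("Chat 1", "Notes", "cancel"))  # None
```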

@@ -68,10 +76,10 @@ def rename_chat(self, chat_name, label_element):

|

|||||||

extra_child=entry,

|

extra_child=entry,

|

||||||

close_response="cancel"

|

close_response="cancel"

|

||||||

)

|

)

|

||||||

entry.connect("activate", lambda dialog, old_chat_name=chat_name, entry=entry, label_element=label_element: rename_chat_response(self, dialog, None, old_chat_name, entry, label_element))

|

|

||||||

dialog.add_response("cancel", _("Cancel"))

|

dialog.add_response("cancel", _("Cancel"))

|

||||||

dialog.add_response("rename", _("Rename"))

|

dialog.add_response("rename", _("Rename"))

|

||||||

dialog.set_response_appearance("rename", Adw.ResponseAppearance.SUGGESTED)

|

dialog.set_response_appearance("rename", Adw.ResponseAppearance.SUGGESTED)

|

||||||

|

dialog.set_default_response("rename")

|

||||||

dialog.choose(

|

dialog.choose(

|

||||||

parent = self,

|

parent = self,

|

||||||

cancellable = None,

|

cancellable = None,

|

||||||
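The `entry.connect("activate", ...)` call in `rename_chat` relies on default arguments to pass `chat_name`, `entry` and `label_element` into the handler. GTK calls an `activate` handler with the emitting `Gtk.Entry` as its only argument, so the first lambda parameter (named `dialog` in the diff) receives the entry, and any extra context must be supplied some other way.

The plain-Python illustration below shows how default arguments differ from free variables. Default arguments are evaluated once, when the lambda is created, while a free variable is looked up each time the lambda runs. The difference only shows when the captured name is rebound later. `emit_activate` is a made-up stand-in for GTK's signal dispatch.

```python
# Free variable: every lambda reads `name` when it is called, after
# the comprehension has finished, so all of them see the last value.
late_bound = [lambda: name for name in ["a", "b", "c"]]

# Default argument: the current value of `name` is stored on each
# lambda when it is created.
early_bound = [lambda name=name: name for name in ["a", "b", "c"]]

print([f() for f in late_bound])   # ['c', 'c', 'c']
print([f() for f in early_bound])  # ['a', 'b', 'c']


def emit_activate(handler, widget):
    """Hypothetical dispatcher: like GTK, passes only the emitting widget."""
    return handler(widget)


chat_name = "Old Chat"
handler = lambda widget, old_chat_name=chat_name: (old_chat_name, widget)
chat_name = "Changed later"  # does not affect the stored default
print(emit_activate(handler, "entry-widget"))  # ('Old Chat', 'entry-widget')
```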

@@ -82,7 +90,8 @@ def rename_chat(self, chat_name, label_element):

|

|||||||

|

|

||||||

def new_chat_response(self, dialog, task, entry):

|

def new_chat_response(self, dialog, task, entry):

|

||||||

chat_name = _("New Chat")

|

chat_name = _("New Chat")

|

||||||

if entry is not None and entry.get_text() != "": chat_name = entry.get_text()

|

if entry is not None and entry.get_text() != "":

|

||||||

|

chat_name = entry.get_text()

|

||||||

if chat_name and (task is None or dialog.choose_finish(task) == "create"):

|

if chat_name and (task is None or dialog.choose_finish(task) == "create"):

|

||||||

self.new_chat(chat_name)

|

self.new_chat(chat_name)

|

||||||

|

|

||||||

@@ -95,10 +104,10 @@ def new_chat(self):

|

|||||||

extra_child=entry,

|

extra_child=entry,

|

||||||

close_response="cancel"

|

close_response="cancel"

|

||||||

)

|

)

|

||||||

entry.connect("activate", lambda dialog, entry: new_chat_response(self, dialog, None, entry))

|

|

||||||

dialog.add_response("cancel", _("Cancel"))

|

dialog.add_response("cancel", _("Cancel"))

|

||||||

dialog.add_response("create", _("Create"))

|

dialog.add_response("create", _("Create"))

|

||||||

dialog.set_response_appearance("create", Adw.ResponseAppearance.SUGGESTED)

|

dialog.set_response_appearance("create", Adw.ResponseAppearance.SUGGESTED)

|

||||||

|

dialog.set_default_response("create")

|

||||||

dialog.choose(

|

dialog.choose(

|

||||||

parent = self,

|

parent = self,

|

||||||

cancellable = None,

|

cancellable = None,

|

||||||

@@ -121,6 +130,7 @@ def stop_pull_model(self, model_name):

|

|||||||

dialog.add_response("cancel", _("Cancel"))

|

dialog.add_response("cancel", _("Cancel"))

|

||||||

dialog.add_response("stop", _("Stop"))

|

dialog.add_response("stop", _("Stop"))

|

||||||

dialog.set_response_appearance("stop", Adw.ResponseAppearance.DESTRUCTIVE)

|

dialog.set_response_appearance("stop", Adw.ResponseAppearance.DESTRUCTIVE)

|

||||||

|

dialog.set_default_response("stop")

|

||||||

dialog.choose(

|

dialog.choose(

|

||||||

parent = self.manage_models_dialog,

|

parent = self.manage_models_dialog,

|

||||||

cancellable = None,

|

cancellable = None,

|

||||||

@@ -142,6 +152,7 @@ def delete_model(self, model_name):

|

|||||||

dialog.add_response("cancel", _("Cancel"))

|

dialog.add_response("cancel", _("Cancel"))

|

||||||

dialog.add_response("delete", _("Delete"))

|

dialog.add_response("delete", _("Delete"))

|

||||||

dialog.set_response_appearance("delete", Adw.ResponseAppearance.DESTRUCTIVE)

|

dialog.set_response_appearance("delete", Adw.ResponseAppearance.DESTRUCTIVE)

|

||||||

|

dialog.set_default_response("delete")

|

||||||

dialog.choose(

|

dialog.choose(

|

||||||

parent = self.manage_models_dialog,

|

parent = self.manage_models_dialog,

|

||||||

cancellable = None,

|

cancellable = None,

|

||||||

@@ -164,6 +175,7 @@ def remove_attached_file(self, name):

|

|||||||

dialog.add_response("cancel", _("Cancel"))

|

dialog.add_response("cancel", _("Cancel"))

|

||||||

dialog.add_response("remove", _("Remove"))

|

dialog.add_response("remove", _("Remove"))

|

||||||

dialog.set_response_appearance("remove", Adw.ResponseAppearance.DESTRUCTIVE)

|

dialog.set_response_appearance("remove", Adw.ResponseAppearance.DESTRUCTIVE)

|

||||||

|

dialog.set_default_response("remove")

|

||||||

dialog.choose(

|

dialog.choose(

|

||||||

parent = self,

|

parent = self,

|

||||||

cancellable = None,

|

cancellable = None,

|

||||||

@@ -172,34 +184,46 @@ def remove_attached_file(self, name):

|

|||||||

|

|

||||||

# RECONNECT REMOTE | WORKS

|

# RECONNECT REMOTE | WORKS

|

||||||

|

|

||||||

def reconnect_remote_response(self, dialog, task, entry):

|

def reconnect_remote_response(self, dialog, task, url_entry, bearer_entry):

|

||||||

response = dialog.choose_finish(task)

|

response = dialog.choose_finish(task)

|

||||||

if not task or response == "remote":

|

if not task or response == "remote":

|

||||||

self.connect_remote(entry.get_text())

|

self.connect_remote(url_entry.get_text(), bearer_entry.get_text())

|

||||||

elif response == "local":

|

elif response == "local":

|

||||||