Compare commits

494 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

13d1572dd5 | ||

|

|

f2fa417194 | ||

|

|

4bf64c98e0 | ||

|

|

6fb36b1cc4 | ||

|

|

89b600f964 | ||

|

|

91c54a4565 | ||

|

|

c3b105c30b | ||

|

|

7c4c1e0997 | ||

|

|

f50c98befc | ||

|

|

98a0f60be9 | ||

|

|

d9e6b08fd7 | ||

|

|

d36f6b6644 | ||

|

|

e67d0bea83 | ||

|

|

218c10f4ad | ||

|

|

134a907eff | ||

|

|

97ee2e7a24 | ||

|

|

ed62aed6a4 | ||

|

|

b6ab989ac8 | ||

|

|

cefd758846 | ||

|

|

c114ae67ba | ||

|

|

0a7f7e5ac2 | ||

|

|

22db4a43d9 | ||

|

|

70e4d8f407 | ||

|

|

3da5207f53 | ||

|

|

f00122d789 | ||

|

|

6a19ca266e | ||

|

|

4f9aebf7a3 | ||

|

|

61f9e187bd | ||

|

|

27126736a4 | ||

|

|

c9cf2bfefc | ||

|

|

e03ea42be3 | ||

|

|

3253e67680 | ||

|

|

e189769f3f | ||

|

|

f6637493db | ||

|

|

063da38597 | ||

|

|

7587b03828 | ||

|

|

8fffb64f79 | ||

|

|

735eae0d0e | ||

|

|

8c98be6ef6 | ||

|

|

115e22e52c | ||

|

|

792a81ad03 | ||

|

|

2ea0ff6870 | ||

|

|

6242087152 | ||

|

|

1da6e31de1 | ||

|

|

cb4979ab7c | ||

|

|

da653c754d | ||

|

|

c4907b81fd | ||

|

|

4c104560d5 | ||

|

|

2253e378ac | ||

|

|

ba66ac40a3 | ||

|

|

40d0d92498 | ||

|

|

4ed6cf8e18 | ||

|

|

3fc1c74f51 | ||

|

|

150e8779c7 | ||

|

|

5462248565 | ||

|

|

0bc9f79f99 | ||

|

|

8bfa0830c1 | ||

|

|

ffefe2b141 | ||

|

|

c8679d6fa5 | ||

|

|

8fd8d920e6 | ||

|

|

202da99fa7 | ||

|

|

f084d6e447 | ||

|

|

9ab0084e18 | ||

|

|

e42eec3e31 | ||

|

|

e553215bf1 | ||

|

|

50eedc5326 | ||

|

|

f6975f1b6d | ||

|

|

35dac564b0 | ||

|

|

7e1a3713b5 | ||

|

|

a99d1f11c2 | ||

|

|

19523ba37a | ||

|

|

9bec816965 | ||

|

|

cbb7605851 | ||

|

|

c856b49268 | ||

|

|

fb04e4cb4f | ||

|

|

00527a6271 | ||

|

|

462657b7bb | ||

|

|

12fb88f3fd | ||

|

|

22af279548 | ||

|

|

00fc442348 | ||

|

|

32df119c60 | ||

|

|

ef8ec59977 | ||

|

|

3156c70260 | ||

|

|

7f042a906d | ||

|

|

ff5e663185 | ||

|

|

9d0daea052 | ||

|

|

31a10ee7d6 | ||

|

|

38abd208ff | ||

|

|

c34713eff5 | ||

|

|

d55aaf19a2 | ||

|

|

9f4b6faf28 | ||

|

|

57184f0a5c | ||

|

|

df568c7217 | ||

|

|

ed440d8935 | ||

|

|

70c183c71d | ||

|

|

b44d457bc5 | ||

|

|

15e4bbb62f | ||

|

|

5d95ba1c15 | ||

|

|

7e81200f80 | ||

|

|

b952fa07b5 | ||

|

|

15bd4335e8 | ||

|

|

d79a1236a0 | ||

|

|

ce11a308bf | ||

|

|

aa1fbcebe7 | ||

|

|

efbfb1e82a | ||

|

|

f497f1c5dc | ||

|

|

9ecf231307 | ||

|

|

66a9627b29 | ||

|

|

f03c01b6a6 | ||

|

|

29a5251d63 | ||

|

|

fcb956ff23 | ||

|

|

363fb882f3 | ||

|

|

e24cbb65b1 | ||

|

|

cf4d37a1c0 | ||

|

|

6394569b3b | ||

|

|

e6c855fcf9 | ||

|

|

c00061f46b | ||

|

|

67d572bd64 | ||

|

|

06769aba90 | ||

|

|

f5845e95e6 | ||

|

|

4d529619d6 | ||

|

|

95561f205c | ||

|

|

e855466280 | ||

|

|

c1c30c993c | ||

|

|

7da70097f2 | ||

|

|

01b6ae6bee | ||

|

|

9c1e0ea263 | ||

|

|

22116b0d1e | ||

|

|

03d92de88b | ||

|

|

0be0942da3 | ||

|

|

b488b64473 | ||

|

|

56eac5ccd6 | ||

|

|

ad5d6dfa41 | ||

|

|

c4fb424514 | ||

|

|

28e09d5c2e | ||

|

|

633507fecd | ||

|

|

5f3c01d231 | ||

|

|

4851b7858b | ||

|

|

d4f359bba7 | ||

|

|

40afce9fb0 | ||

|

|

61d2e7c7a0 | ||

|

|

0cb1891d9e | ||

|

|

5bd55843db | ||

|

|

5150fd769a | ||

|

|

d5eea3397c | ||

|

|

11b0b6a8d7 | ||

|

|

ecc93cda78 | ||

|

|

3653af7b81 | ||

|

|

bed097c760 | ||

|

|

f8d18afd13 | ||

|

|

991c01cba0 | ||

|

|

3e6a2b040f | ||

|

|

22138933f7 | ||

|

|

3d1a3a9ece | ||

|

|

0d5350b24d | ||

|

|

4fb83ed441 | ||

|

|

e8cfc9a9ee | ||

|

|

13a076bd9f | ||

|

|

63296219cf | ||

|

|

1ee36b113a | ||

|

|

dce91739e7 | ||

|

|

dd29077499 | ||

|

|

e398d55211 | ||

|

|

cbdfe43896 | ||

|

|

25eb1526d3 | ||

|

|

318f15925f | ||

|

|

95912e0211 | ||

|

|

f96b652605 | ||

|

|

24b1ff2e1b | ||

|

|

7e79f715b1 | ||

|

|

a061feeb71 | ||

|

|

12790b5ae1 | ||

|

|

2e2626fa99 | ||

|

|

3962315a6e | ||

|

|

08c0074ae5 | ||

|

|

295429acdf | ||

|

|

a842258e9e | ||

|

|

053efabfc8 | ||

|

|

a12083bfe9 | ||

|

|

672b8098bd | ||

|

|

db03cce49f | ||

|

|

e8b0733c32 | ||

|

|

68d970716f | ||

|

|

a0338bcccb | ||

|

|

eb92126e4b | ||

|

|

d26caea5f0 | ||

|

|

6d339aad5e | ||

|

|

e7b6da4f62 | ||

|

|

37e36add45 | ||

|

|

ed2501adf4 | ||

|

|

83db9fd9d4 | ||

|

|

6cb49cfc98 | ||

|

|

a928d2c074 | ||

|

|

4d35cea229 | ||

|

|

51d2326dee | ||

|

|

80dcae194b | ||

|

|

50759adb8e | ||

|

|

f46d16d257 | ||

|

|

5a9eeefaa7 | ||

|

|

f4f91d9aa1 | ||

|

|

ad5f73e985 | ||

|

|

d06ac2c2eb | ||

|

|

343411cd8c | ||

|

|

e14750db44 | ||

|

|

3be3b21f93 | ||

|

|

5c28245ff3 | ||

|

|

b433091e90 | ||

|

|

fe22f34a3d | ||

|

|

ef395a27a3 | ||

|

|

362e62ee36 | ||

|

|

80aabcb805 | ||

|

|

c283f3f1d2 | ||

|

|

603fdb8150 | ||

|

|

6e0ae393a4 | ||

|

|

abbdbf1abe | ||

|

|

a68973ece6 | ||

|

|

50aad8cb6d | ||

|

|

79a7840f24 | ||

|

|

19a8aade60 | ||

|

|

a591270d58 | ||

|

|

1087d3e336 | ||

|

|

fb7393fe5c | ||

|

|

d3159ae6ea | ||

|

|

c2c047d8b7 | ||

|

|

e897d6c931 | ||

|

|

3ceb25ccc6 | ||

|

|

9d740a7db9 | ||

|

|

4e77898487 | ||

|

|

c913a25679 | ||

|

|

758e055f1c | ||

|

|

a08be7351c | ||

|

|

08c0c54b98 | ||

|

|

687b99f9ab | ||

|

|

5801d43af9 | ||

|

|

5a28a16119 | ||

|

|

47e58d2ccd | ||

|

|

d33e9c53d8 | ||

|

|

8869296aed | ||

|

|

da4dd3341a | ||

|

|

15aa7fb844 | ||

|

|

d74f535968 | ||

|

|

521a95fc6b | ||

|

|

9548e2ec40 | ||

|

|

f1a3c7136f | ||

|

|

4542f26bb7 | ||

|

|

4926cb157e | ||

|

|

0d3b544a73 | ||

|

|

daf56c2de4 | ||

|

|

46e3921585 | ||

|

|

707984e20d | ||

|

|

cfb79a70be | ||

|

|

c32d0acfd4 | ||

|

|

38de4ac18e | ||

|

|

923c0e52e2 | ||

|

|

e753591b45 | ||

|

|

12e754e4bc | ||

|

|

eb3919ad63 | ||

|

|

1b94864422 | ||

|

|

51a90e0b79 | ||

|

|

7264902199 | ||

|

|

f08b03308e | ||

|

|

f831466d87 | ||

|

|

a54ec6fa9d | ||

|

|

ed3136cffd | ||

|

|

e37c5acbf9 | ||

|

|

9d2ad2eb3a | ||

|

|

d1a0d6375b | ||

|

|

ebf3af38c8 | ||

|

|

80b433e7a4 | ||

|

|

5da5c2c702 | ||

|

|

fe8626f650 | ||

|

|

ee998d978f | ||

|

|

cee360d5a2 | ||

|

|

5098babfd2 | ||

|

|

7026655116 | ||

|

|

01ba38a23b | ||

|

|

ef2c2650b0 | ||

|

|

a7d955a5bf | ||

|

|

129677d27c | ||

|

|

9ae011a31b | ||

|

|

abf1253980 | ||

|

|

e0c7e9c771 | ||

|

|

6a4c98ef18 | ||

|

|

809e23fb9c | ||

|

|

69eaa56240 | ||

|

|

df2a9d7b26 | ||

|

|

da97a7c6ee | ||

|

|

bb7a8b659a | ||

|

|

0999a64356 | ||

|

|

4647e1ba47 | ||

|

|

ea0caf03d6 | ||

|

|

1c9ce2a117 | ||

|

|

01b38fa37a | ||

|

|

80d1149932 | ||

|

|

cc1500f007 | ||

|

|

d0735de129 | ||

|

|

bbf678cb75 | ||

|

|

a44a7b5044 | ||

|

|

427ecd4499 | ||

|

|

0a5bb0b97f | ||

|

|

252b76e7eb | ||

|

|

5cf2be2b7d | ||

|

|

7f9a5eb516 | ||

|

|

9ecfbe8c3f | ||

|

|

377adc8699 | ||

|

|

8598f73be7 | ||

|

|

6f9b3b7c02 | ||

|

|

db198e10c0 | ||

|

|

d2271a7ade | ||

|

|

8d3d650ecf | ||

|

|

4545f5a1b2 | ||

|

|

62c1354f8b | ||

|

|

2bd99860f2 | ||

|

|

8026550f7a | ||

|

|

68c03176d4 | ||

|

|

ed54b2846a | ||

|

|

ff927d6c77 | ||

|

|

bd006da0c1 | ||

|

|

a409800279 | ||

|

|

5d89ccc729 | ||

|

|

fef3926ce3 | ||

|

|

c95be9611d | ||

|

|

1c4fc4341e | ||

|

|

3ddc172437 | ||

|

|

0d65cf1cbc | ||

|

|

cddcf496b2 | ||

|

|

9333b31444 | ||

|

|

cbd3e90073 | ||

|

|

a7b6e6bbce | ||

|

|

801c10fb77 | ||

|

|

50520b8474 | ||

|

|

b66e2102d3 | ||

|

|

8c0f1fd4d5 | ||

|

|

8b851d1b56 | ||

|

|

f36d6e1b29 | ||

|

|

eecac162ef | ||

|

|

82e7a3a9e1 | ||

|

|

f0505a0242 | ||

|

|

11dd13b430 | ||

|

|

b8fe222052 | ||

|

|

47d19a58aa | ||

|

|

fd67afbf33 | ||

|

|

d06e08a64e | ||

|

|

77b08d9e52 | ||

|

|

9451bf88d0 | ||

|

|

82bb50d663 | ||

|

|

edc3053774 | ||

|

|

1320ddb7d4 | ||

|

|

d95f06a230 | ||

|

|

938ace91c1 | ||

|

|

175cfad81c | ||

|

|

2f399dbb64 | ||

|

|

27558b85af | ||

|

|

bcc1f3fa65 | ||

|

|

fd92a86c5e | ||

|

|

3b95d369b8 | ||

|

|

a12920d801 | ||

|

|

2dd63df533 | ||

|

|

cea1aa5028 | ||

|

|

54b96d4e3a | ||

|

|

a470136476 | ||

|

|

7d35cb08dd | ||

|

|

1f03f1032e | ||

|

|

9e2b55a249 | ||

|

|

0fbb94cd72 | ||

|

|

004b3f8574 | ||

|

|

7d1931dd17 | ||

|

|

8b7f41afa7 | ||

|

|

4bc0832865 | ||

|

|

a66c6d5f40 | ||

|

|

33b7cae24d | ||

|

|

47f5c88ef2 | ||

|

|

ffe382aee2 | ||

|

|

919f71ee78 | ||

|

|

404d4476ae | ||

|

|

f2b243cd5f | ||

|

|

c2fae41355 | ||

|

|

8fda2cde9e | ||

|

|

930380cdce | ||

|

|

5b788ffe15 | ||

|

|

521c2bdde5 | ||

|

|

eee73b1218 | ||

|

|

87d6da26c9 | ||

|

|

2029cd5cd2 | ||

|

|

36be752ee6 | ||

|

|

5b3586789f | ||

|

|

6ce670e643 | ||

|

|

dd70e8139c | ||

|

|

3ac0936d1a | ||

|

|

1477bacf6a | ||

|

|

d339a18901 | ||

|

|

f9460416d9 | ||

|

|

a9112cf3da | ||

|

|

c873b49700 | ||

|

|

3c553e37d8 | ||

|

|

0c47fbb1f7 | ||

|

|

476138ef53 | ||

|

|

385ca4f0fa | ||

|

|

46fd642789 | ||

|

|

e48249c7c9 | ||

|

|

9e8535e97e | ||

|

|

a794c63a5a | ||

|

|

f3610a46a2 | ||

|

|

20fd2cf6e3 | ||

|

|

7bf345d09d | ||

|

|

17e9560449 | ||

|

|

c02e6a565e | ||

|

|

7fbc9b9bde | ||

|

|

416e97d488 | ||

|

|

753060d9f3 | ||

|

|

972c53000c | ||

|

|

2b948a49a0 | ||

|

|

7999548738 | ||

|

|

d4d13b793f | ||

|

|

210b6f0d89 | ||

|

|

7f5894b274 | ||

|

|

2dc24ab945 | ||

|

|

2f153c9974 | ||

|

|

fa22647acd | ||

|

|

dd5d82fe7a | ||

|

|

98b179aeb5 | ||

|

|

e1f1c005a0 | ||

|

|

6e226c5a4f | ||

|

|

7440fa5a37 | ||

|

|

4fe204605a | ||

|

|

4446b42b82 | ||

|

|

4b6cd17d0a | ||

|

|

1a6e74271c | ||

|

|

6ba3719031 | ||

|

|

dd95e3df7e | ||

|

|

69fd7853c8 | ||

|

|

c01c478ffe | ||

|

|

f8be1da83a | ||

|

|

3a7625486e | ||

|

|

fdc3b6c573 | ||

|

|

76939ed51f | ||

|

|

b9cf761f4a | ||

|

|

4c515ba541 | ||

|

|

d7c3595bf1 | ||

|

|

1fbd6a0824 | ||

|

|

ccb59c7f02 | ||

|

|

04bef3e82a | ||

|

|

17105b98ed | ||

|

|

4bff1515a9 | ||

|

|

0a75893346 | ||

|

|

2ed92467f9 | ||

|

|

634ac122d9 | ||

|

|

44640b7e53 | ||

|

|

47e7b22a7e | ||

|

|

918928d4bb | ||

|

|

69fc172779 | ||

|

|

d84dabbe4d | ||

|

|

23114210c4 | ||

|

|

ea80e5a223 | ||

|

|

6087f31d41 | ||

|

|

30ee292a32 | ||

|

|

705a9319f5 | ||

|

|

c789d9d87c | ||

|

|

a7681b5505 | ||

|

|

9e74d8af0b | ||

|

|

b52061f849 | ||

|

|

01b875c283 | ||

|

|

4cc3b78321 | ||

|

|

6205db87e6 | ||

|

|

518633b153 | ||

|

|

988ee7b7e7 | ||

|

|

cdadde60ce | ||

|

|

4bb01d86d9 | ||

|

|

4cac43520f | ||

|

|

d6dddd16f1 | ||

|

|

c0da054635 | ||

|

|

2b4d94ca55 | ||

|

|

e8e564738a | ||

|

|

d48fbd8b62 | ||

|

|

c1f80f209e | ||

|

|

ed6b32c827 | ||

|

|

fc436fd352 | ||

|

|

ee6fdb1ca1 | ||

|

|

988db30355 | ||

|

|

ea98ee5e99 | ||

|

|

b8d1d43822 | ||

|

|

0d017c6d14 | ||

|

|

2825e9a003 | ||

|

|

6e9ddfcbf2 | ||

|

|

378689be39 | ||

|

|

31858fad12 | ||

|

|

60351d629d | ||

|

|

715a97159a | ||

|

|

b48ce28b35 | ||

|

|

7ab0448cd3 | ||

|

|

5f6642fa63 | ||

|

|

5a0d1ed408 | ||

|

|

131e8fb6be | ||

|

|

1c7fb8ef93 |

8

.github/ISSUE_TEMPLATE/bug_report.md

vendored

@@ -6,7 +6,7 @@ labels: bug
assignees: ''

---

<!--Please be aware that GNOME Code of Conduct applies to Alpaca, https://conduct.gnome.org/-->
**Describe the bug**
A clear and concise description of what the bug is.

@@ -16,5 +16,7 @@ A clear and concise description of what you expected to happen.
**Screenshots**
If applicable, add screenshots to help explain your problem.

**Additional context**
Add any other context about the problem here.
**Debugging information**
```
Please paste here the debugging information available at 'About Alpaca' > 'Troubleshooting' > 'Debugging Information'
```

2

.github/ISSUE_TEMPLATE/feature_request.md

vendored

@@ -6,7 +6,7 @@ labels: enhancement
assignees: ''

---

<!--Please be aware that GNOME Code of Conduct applies to Alpaca, https://conduct.gnome.org/-->
**Is your feature request related to a problem? Please describe.**
A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

18

.github/workflows/flatpak-builder.yml

vendored

Normal file

@@ -0,0 +1,18 @@
# .github/workflows/flatpak-build.yml
on:
  workflow_dispatch:
name: Flatpak Build
jobs:
  flatpak:
    name: "Flatpak"
    runs-on: ubuntu-latest
    container:
      image: bilelmoussaoui/flatpak-github-actions:gnome-46
      options: --privileged
    steps:
    - uses: actions/checkout@v4
    - uses: flatpak/flatpak-github-actions/flatpak-builder@v6
      with:
        bundle: com.jeffser.Alpaca.flatpak
        manifest-path: com.jeffser.Alpaca.json
        cache-key: flatpak-builder-${{ github.sha }}

24

.github/workflows/pylint.yml

vendored

Normal file

@@ -0,0 +1,24 @@
name: Pylint

on:
  workflow_dispatch:

jobs:
  build:
    runs-on: ubuntu-latest
    strategy:
      matrix:
        python-version: ["3.11"]
    steps:
    - uses: actions/checkout@v4
    - name: Set up Python ${{ matrix.python-version }}
      uses: actions/setup-python@v3
      with:
        python-version: ${{ matrix.python-version }}
    - name: Install dependencies
      run: |
        python -m pip install --upgrade pip
        pip install pylint
    - name: Analysing the code with pylint
      run: |
        pylint --rcfile=.pylintrc $(git ls-files '*.py' | grep -v 'src/available_models_descriptions.py')
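The file selection in the last workflow step (every tracked `*.py` file except `src/available_models_descriptions.py`) can be sketched in Python. This is only an illustration and not part of the workflow: `select_lint_targets` and `tracked_python_files` are hypothetical helper names, and the substring test only approximates `grep -v`, which treats its argument as a regular expression.

```python
import subprocess

# Path excluded from linting in the workflow above.
EXCLUDED = "src/available_models_descriptions.py"


def select_lint_targets(paths, excluded=EXCLUDED):
    """Keep .py paths that do not contain the excluded path (roughly `grep -v`)."""
    return [p for p in paths if p.endswith(".py") and excluded not in p]


def tracked_python_files():
    """List tracked Python files, like `git ls-files '*.py'` (needs a git checkout)."""
    result = subprocess.run(
        ["git", "ls-files", "*.py"],
        check=True,
        capture_output=True,
        text=True,
    )
    return result.stdout.splitlines()


if __name__ == "__main__":
    # Print the files that would be handed to pylint.
    for path in select_lint_targets(tracked_python_files()):
        print(path)
```

Keeping the filter separate from the `git` call means it can be checked without a repository at hand.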

14

.pylintrc

Normal file

@@ -0,0 +1,14 @@
[MASTER]

[MESSAGES CONTROL]
disable=undefined-variable, line-too-long, missing-function-docstring, consider-using-f-string, import-error

[FORMAT]
max-line-length=200

# Reasons for removing some checks:
# undefined-variable: _() is used by the translator on build time but it is not defined on the scripts
# line-too-long: I... I'm too lazy to make the lines shorter, maybe later
# missing-function-docstring I'm not adding a docstring to all the functions, most are self explanatory
# consider-using-f-string I can't use f-string because of the translator
# import-error The linter doesn't have access to all the libraries that the project itself does
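The `consider-using-f-string` exemption can be illustrated with a small sketch. It assumes a gettext-style `_()` lookup; `DemoTranslations` and its one-entry catalog are hypothetical stand-ins for a compiled translation catalog and are not taken from Alpaca's code.

```python
import gettext


class DemoTranslations(gettext.NullTranslations):
    """Hypothetical in-memory catalog standing in for a compiled .mo file."""

    CATALOG = {"Hello, {name}!": "¡Hola, {name}!"}

    def gettext(self, message):
        # Return the translation if the exact message is a catalog key.
        return self.CATALOG.get(message, message)


_ = DemoTranslations().gettext

name = "Ana"

# The literal template is looked up first and formatted afterwards,
# so the catalog key matches and the translated text is used.
good = _("Hello, {name}!").format(name=name)

# An f-string is formatted before _() sees it, so the lookup key is
# already "Hello, Ana!" and the catalog has no entry for it.
bad = _(f"Hello, {name}!")

print(good)
print(bad)
```

With an f-string the translator never receives the untranslated template, which is one plausible reading of the comment in `.pylintrc`.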

34

Alpaca.doap

Normal file

@@ -0,0 +1,34 @@
<Project xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#"
         xmlns:rdfs="http://www.w3.org/2000/01/rdf-schema#"
         xmlns:foaf="http://xmlns.com/foaf/0.1/"
         xmlns:gnome="http://api.gnome.org/doap-extensions#"
         xmlns="http://usefulinc.com/ns/doap#">

  <name xml:lang="en">Alpaca</name>
  <shortdesc xml:lang="en">An Ollama client made with GTK4 and Adwaita</shortdesc>
  <homepage rdf:resource="https://jeffser.com/alpaca" />
  <bug-database rdf:resource="https://github.com/Jeffser/Alpaca/issues"/>
  <programming-language>Python</programming-language>

  <platform>GTK 4</platform>
  <platform>Libadwaita</platform>

  <maintainer>
    <foaf:Person>
      <foaf:name>Jeffry Samuel</foaf:name>
      <foaf:mbox rdf:resource="mailto:jeffrysamuer@gmail.com"/>
      <foaf:account>
        <foaf:OnlineAccount>
          <foaf:accountServiceHomepage rdf:resource="https://github.com"/>
          <foaf:accountName>jeffser</foaf:accountName>
        </foaf:OnlineAccount>
      </foaf:account>
      <foaf:account>
        <foaf:OnlineAccount>
          <foaf:accountServiceHomepage rdf:resource="https://gitlab.gnome.org"/>
          <foaf:accountName>jeffser</foaf:accountName>
        </foaf:OnlineAccount>
      </foaf:account>
    </foaf:Person>
  </maintainer>
</Project>

4

CODE_OF_CONDUCT.md

Normal file

@@ -0,0 +1,4 @@
Alpaca follows [GNOME's code of conduct](https://conduct.gnome.org/), please make sure to read it before interacting in any way with this repository.
To report any misconduct please reach out via private message on
- X (formerly Twitter): [@jeffrysamuer](https://x.com/jeffrysamuer)
- Mastodon: [@jeffser@floss.social](https://floss.social/@jeffser)

30

CONTRIBUTING.md

Normal file

@@ -0,0 +1,30 @@
# Contributing Rules

## Translations

If you want to translate or contribute on existing translations please read [this discussion](https://github.com/Jeffser/Alpaca/discussions/153).

## Code

1) Before contributing code make sure there's an open [issue](https://github.com/Jeffser/Alpaca/issues) for that particular problem or feature.
2) Ask to contribute on the responses to the issue.
3) Wait for [my](https://github.com/Jeffser) approval, I might have already started working on that issue.
4) Test your code before submitting a pull request.

## Q&A

### Do I need to comment my code?

There's no need to add comments if the code is easy to read by itself.

### What if I need help or I don't understand the existing code?

You can reach out on the issue, I'll try to answer as soon as possible.

### What IDE should I use?

I use Gnome Builder but you can use whatever you want.

### Can I be credited?

You might be credited on the GitHub repository in the [thanks](https://github.com/Jeffser/Alpaca/blob/main/README.md#thanks) section of the README.

91

README.md

91

README.md

@ -11,7 +11,11 @@ Alpaca is an [Ollama](https://github.com/ollama/ollama) client where you can man

|

||||

> [!WARNING]

|

||||

> This project is not affiliated at all with Ollama, I'm not responsible for any damages to your device or software caused by running code given by any AI models.

|

||||

|

||||

> [!IMPORTANT]

|

||||

> Please be aware that [GNOME Code of Conduct](https://conduct.gnome.org) applies to Alpaca before interacting with this repository.

|

||||

|

||||

## Features!

|

||||

|

||||

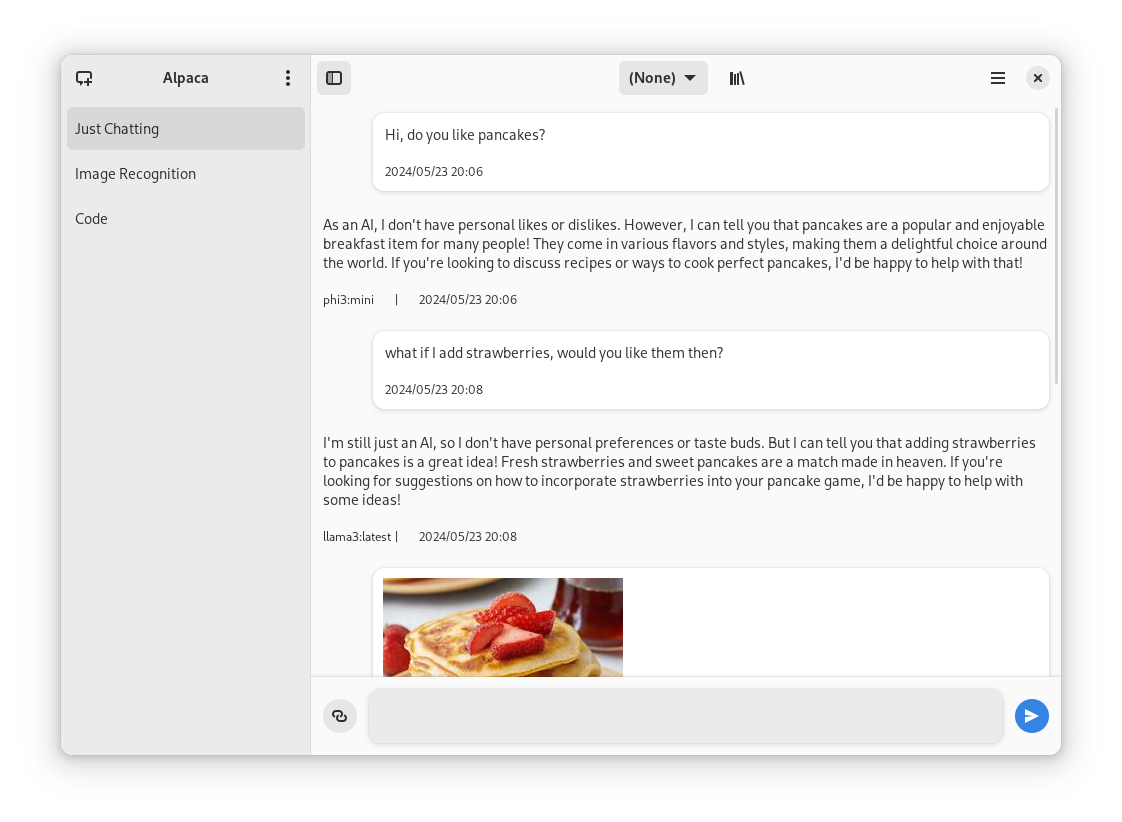

- Talk to multiple models in the same conversation

|

||||

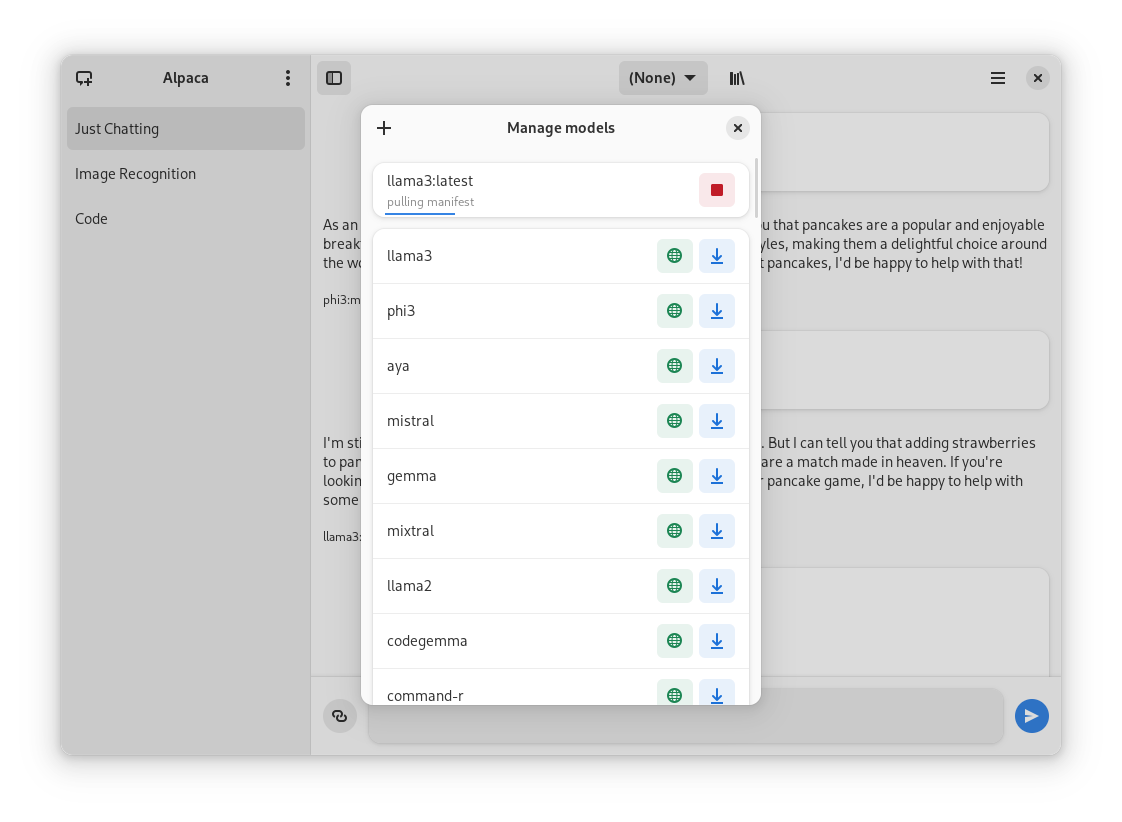

- Pull and delete models from the app

|

||||

- Image recognition

|

||||

@ -21,47 +25,84 @@ Alpaca is an [Ollama](https://github.com/ollama/ollama) client where you can man

|

||||

- Notifications

|

||||

- Import / Export chats

|

||||

- Delete / Edit messages

|

||||

- Regenerate messages

|

||||

- YouTube recognition (Ask questions about a YouTube video using the transcript)

|

||||

- Website recognition (Ask questions about a certain website by parsing the url)

|

||||

|

||||

## Screenies

|

||||

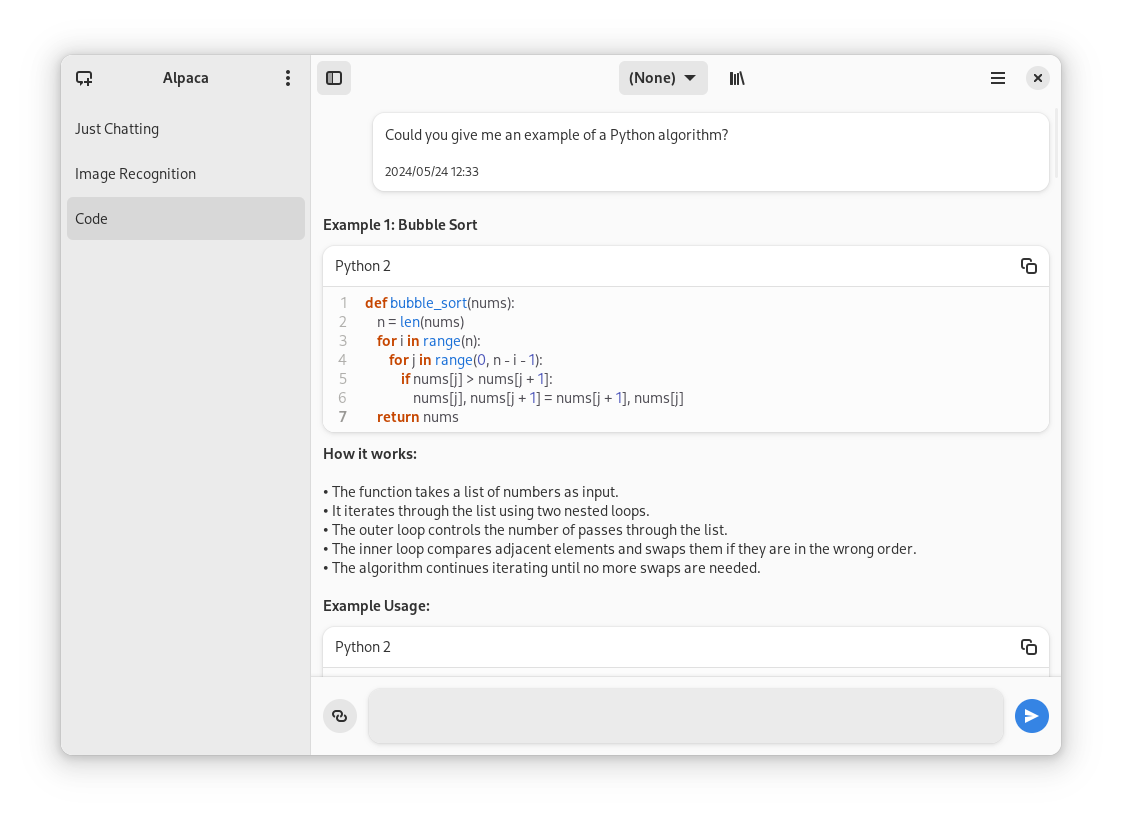

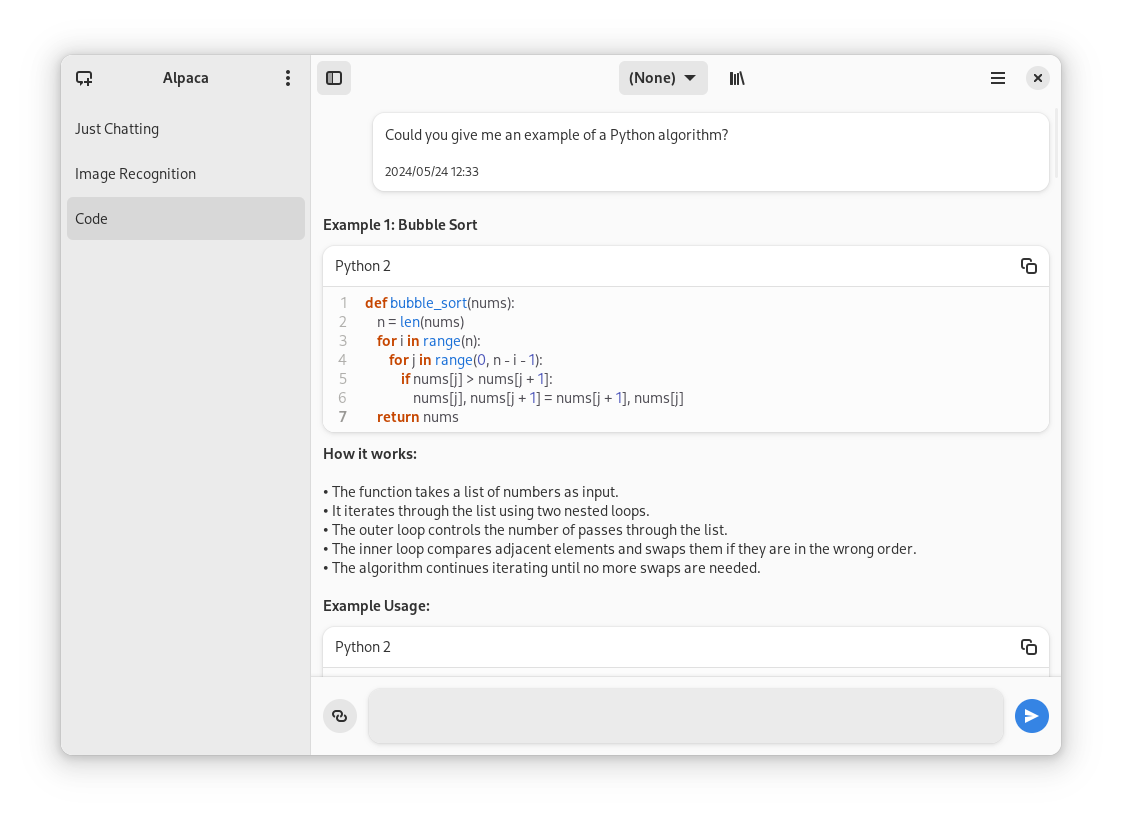

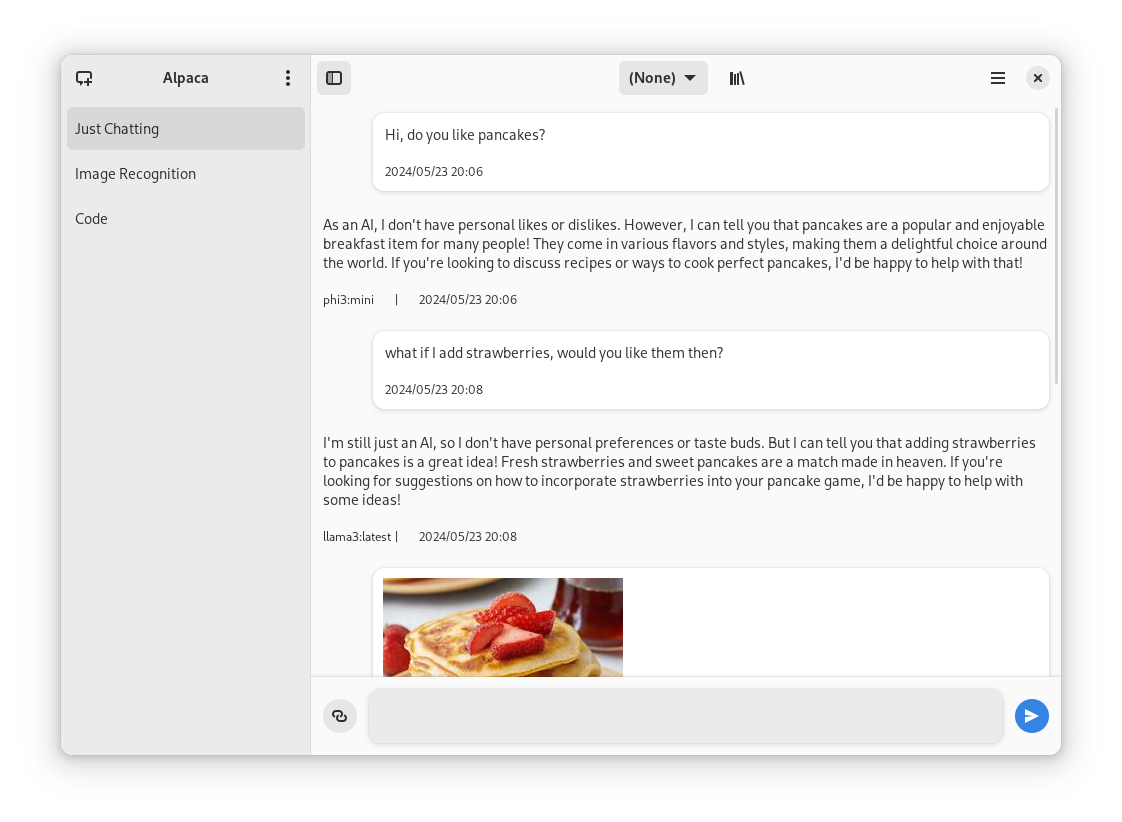

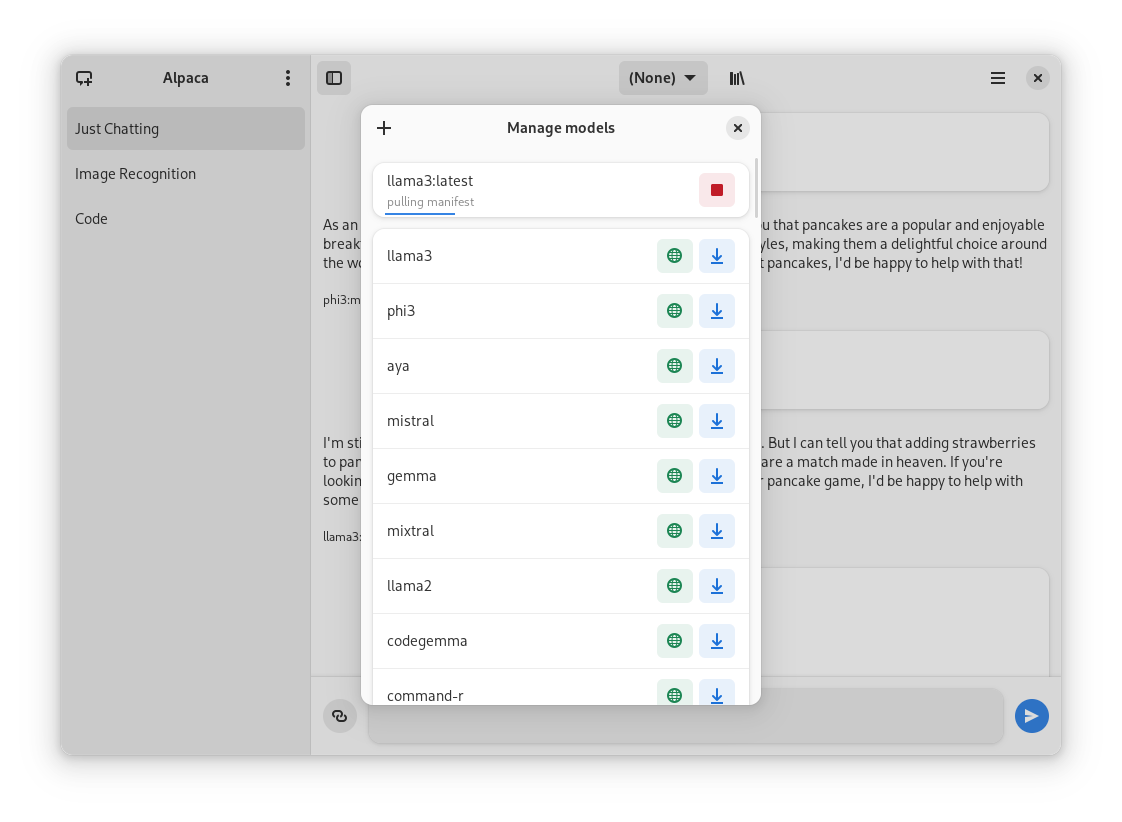

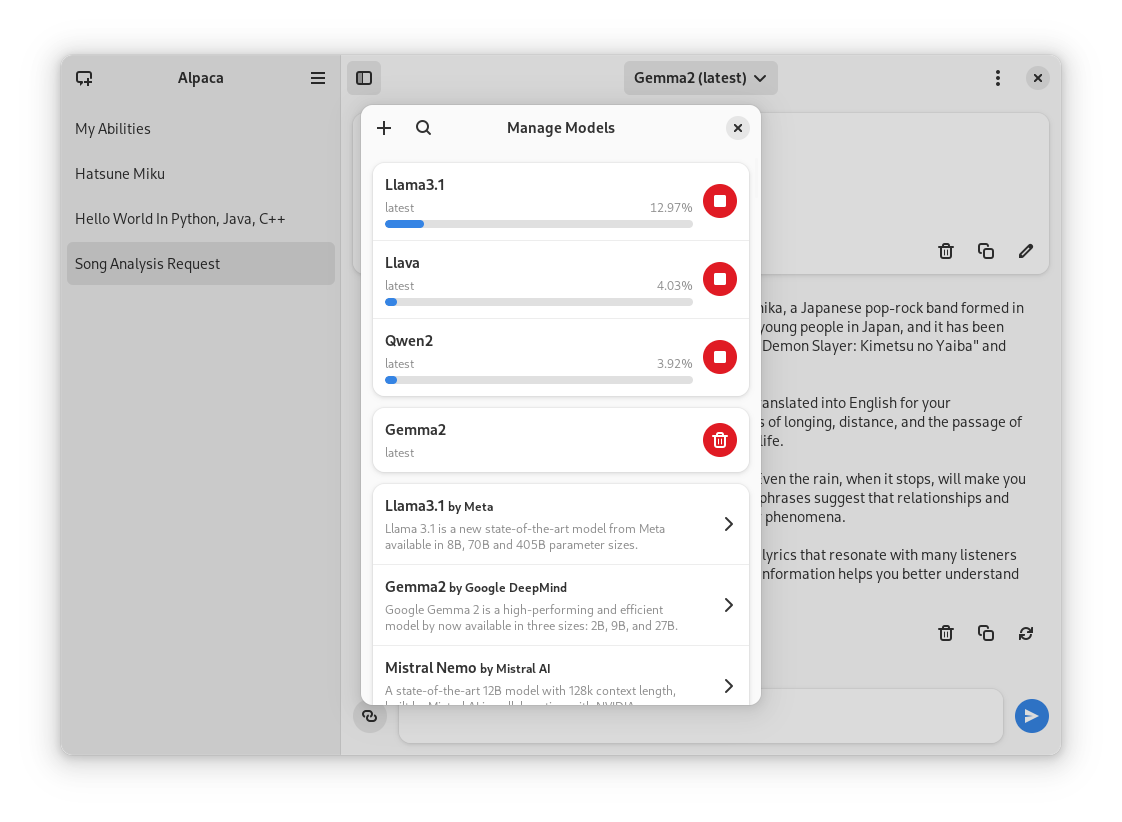

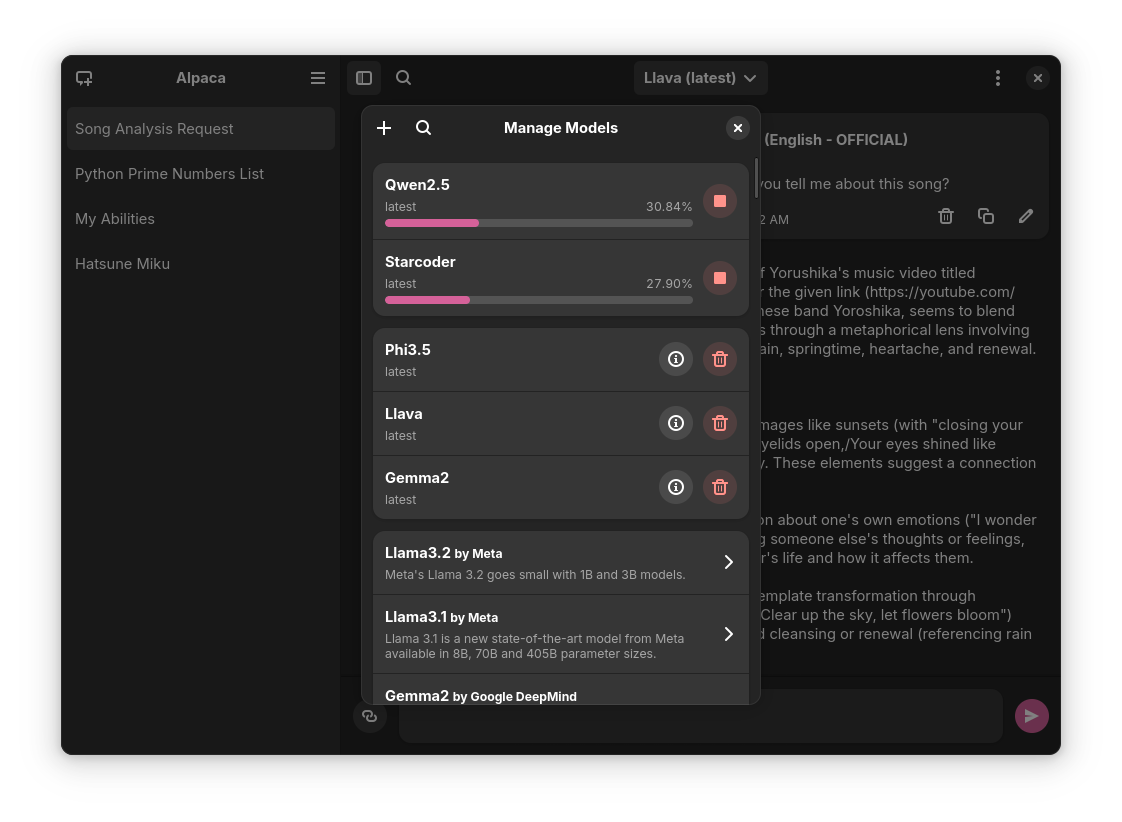

Chatting with a model | Image recognition | Code highlighting

|

||||

:--------------------:|:-----------------:|:----------------------:

|

||||

|  |

|

||||

|

||||

## Preview

|

||||

1. Clone repo using Gnome Builder

|

||||

2. Press the `run` button

|

||||

Normal conversation | Image recognition | Code highlighting | YouTube transcription | Model management

|

||||

:------------------:|:-----------------:|:-----------------:|:---------------------:|:----------------:

|

||||

|  |  |  |

|

||||

|

||||

## Instalation

|

||||

1. Go to the `releases` page

|

||||

2. Download the latest flatpak package

|

||||

3. Open it

|

||||

## Installation

|

||||

|

||||

## Ollama session tips

|

||||

### Flathub

|

||||

|

||||

### Change the port of the integrated Ollama instance

|

||||

Go to `~/.var/app/com.jeffser.Alpaca/config/server.json` and change the `"local_port"` value, by default it is `11435`.

|

||||

You can find the latest stable version of the app on [Flathub](https://flathub.org/apps/com.jeffser.Alpaca)

|

||||

|

||||

### Backup all the chats

|

||||

The chat data is located in `~/.var/app/com.jeffser.Alpaca/data/chats` you can copy that directory wherever you want to.

|

||||

### Flatpak Package

|

||||

|

||||

### Force showing the welcome dialog

|

||||

To do that you just need to delete the file `~/.var/app/com.jeffser.Alpaca/config/server.json`, this won't affect your saved chats or models.

|

||||

Everytime a new version is published they become available on the [releases page](https://github.com/Jeffser/Alpaca/releases) of the repository

|

||||

|

||||

### Add/Change environment variables for Ollama

|

||||

You can change anything except `$HOME` and `$OLLAMA_HOST`, to do this go to `~/.var/app/com.jeffser.Alpaca/config/server.json` and change `ollama_overrides` accordingly, some overrides are available to change on the GUI.

|

||||

### Snap Package

|

||||

|

||||

You can also find the Snap package on the [releases page](https://github.com/Jeffser/Alpaca/releases), to install it run this command:

|

||||

```BASH

|

||||

sudo snap install ./{package name} --dangerous

|

||||

```

|

||||

The `--dangerous` comes from the package being installed without any involvement of the SnapStore, I'm working on getting the app there, but for now you can test the app this way.

|

||||

|

||||

### Building Git Version

|

||||

|

||||

Note: This is not recommended since the prerelease versions of the app often present errors and general instability.

|

||||

|

||||

1. Clone the project

|

||||

2. Open with Gnome Builder

|

||||

3. Press the run button (or export if you want to build a Flatpak package)

|

||||

|

||||

## Translators

|

||||

|

||||

Language | Contributors

|

||||

:----------------------|:-----------

|

||||

🇷🇺 Russian | [Alex K](https://github.com/alexkdeveloper)

|

||||

🇪🇸 Spanish | [Jeffry Samuel](https://github.com/jeffser)

|

||||

🇫🇷 French | [Louis Chauvet-Villaret](https://github.com/loulou64490) , [Théo FORTIN](https://github.com/topiga)

|

||||

🇧🇷 Brazilian Portuguese | [Daimar Stein](https://github.com/not-a-dev-stein) , [Bruno Antunes](https://github.com/antun3s)

|

||||

🇳🇴 Norwegian | [CounterFlow64](https://github.com/CounterFlow64)

|

||||

🇮🇳 Bengali | [Aritra Saha](https://github.com/olumolu)

|

||||

🇨🇳 Simplified Chinese | [Yuehao Sui](https://github.com/8ar10der) , [Aleksana](https://github.com/Aleksanaa)

|

||||

🇮🇳 Hindi | [Aritra Saha](https://github.com/olumolu)

|

||||

🇹🇷 Turkish | [YusaBecerikli](https://github.com/YusaBecerikli)

|

||||

🇺🇦 Ukrainian | [Simon](https://github.com/OriginalSimon)

|

||||

🇩🇪 German | [Marcel Margenberg](https://github.com/MehrzweckMandala)

|

||||

🇮🇱 Hebrew | [Yosef Or Boczko](https://github.com/yoseforb)

|

||||

🇮🇳 Telugu | [Aryan Karamtoth](https://github.com/SpaciousCoder78)

|

||||

|

||||

Want to add a language? Visit [this discussion](https://github.com/Jeffser/Alpaca/discussions/153) to get started!

|

||||

|

||||

---

|

||||

|

||||

## Thanks

|

||||

- [not-a-dev-stein](https://github.com/not-a-dev-stein) for their help with requesting a new icon, bug reports and the translation to Brazilian Portuguese

|

||||

|

||||

- [not-a-dev-stein](https://github.com/not-a-dev-stein) for their help with requesting a new icon and bug reports

|

||||

- [TylerLaBree](https://github.com/TylerLaBree) for their requests and ideas

|

||||

- [Alexkdeveloper](https://github.com/alexkdeveloper) for their help translating the app to Russian

|

||||

- [Imbev](https://github.com/imbev) for their reports and suggestions

|

||||

- [Nokse](https://github.com/Nokse22) for their contributions to the UI and table rendering

|

||||

- [Louis Chauvet-Villaret](https://github.com/loulou64490) for their suggestions and help translating the app to French

|

||||

- [CounterFlow64](https://github.com/CounterFlow64) for their help translating the app to Norwegian

|

||||

- [Louis Chauvet-Villaret](https://github.com/loulou64490) for their suggestions

|

||||

- [Aleksana](https://github.com/Aleksanaa) for her help with better handling of directories

|

||||

- [Gnome Builder Team](https://gitlab.gnome.org/GNOME/gnome-builder) for the awesome IDE I use to develop Alpaca

|

||||

- Sponsors for giving me enough money to be able to take a ride to my campus every time I need to <3

|

||||

- Everyone that has shared kind words of encouragement!

|

||||

|

||||

## About forks

|

||||

If you want to fork this... I mean, I think it would be better if you start from scratch, my code isn't well documented at all, but if you really want to, please give me some credit, that's all I ask for... And maybe a donation (joke)

|

||||

---

|

||||

|

||||

## Dependencies

|

||||

|

||||

- [Requests](https://github.com/psf/requests)

|

||||

- [Pillow](https://github.com/python-pillow/Pillow)

|

||||

- [Pypdf](https://github.com/py-pdf/pypdf)

|

||||

- [Pytube](https://github.com/pytube/pytube)

|

||||

- [Html2Text](https://github.com/aaronsw/html2text)

|

||||

- [Ollama](https://github.com/ollama/ollama)

|

||||

- [Numactl](https://github.com/numactl/numactl)

|

||||

|

||||

12

SECURITY.md

Normal file

@@ -0,0 +1,12 @@
# Security Policy

## Supported Packaging

Alpaca only supports [Flatpak](https://flatpak.org/) packaging officially, any other packaging methods might not behave as expected.

## Official Versions

The only ways Alpaca is being distributed officially are:

- [Alpaca's GitHub Repository Releases Page](https://github.com/Jeffser/Alpaca/releases)
- [Flathub](https://flathub.org/apps/com.jeffser.Alpaca)

@ -1,16 +1,28 @@

|

||||

{

|

||||

"id" : "com.jeffser.Alpaca",

|

||||

"runtime" : "org.gnome.Platform",

|

||||

"runtime-version" : "46",

|

||||

"runtime-version" : "47",

|

||||

"sdk" : "org.gnome.Sdk",

|

||||

"command" : "alpaca",

|

||||

"finish-args" : [

|

||||

"--share=network",

|

||||

"--share=ipc",

|

||||

"--socket=fallback-x11",

|

||||

"--device=dri",

|

||||

"--socket=wayland"

|

||||

"--device=all",

|

||||

"--socket=wayland",

|

||||

"--filesystem=/sys/module/amdgpu:ro",

|

||||

"--env=LD_LIBRARY_PATH=/app/lib:/usr/lib/x86_64-linux-gnu/GL/default/lib:/usr/lib/x86_64-linux-gnu/openh264/extra:/usr/lib/x86_64-linux-gnu/openh264/extra:/usr/lib/sdk/llvm15/lib:/usr/lib/x86_64-linux-gnu/GL/default/lib:/usr/lib/ollama:/app/plugins/AMD/lib/ollama",

|

||||

"--env=GSK_RENDERER=ngl"

|

||||

],

|

||||

"add-extensions": {

|

||||

"com.jeffser.Alpaca.Plugins": {

|

||||

"add-ld-path": "/app/plugins/AMD/lib/ollama",

|

||||

"directory": "plugins",

|

||||

"no-autodownload": true,

|

||||

"autodelete": true,

|

||||

"subdirectories": true

|

||||

}

|

||||

},

|

||||

"cleanup" : [

|

||||

"/include",

|

||||

"/lib/pkgconfig",

|

||||

@ -99,6 +111,45 @@

|

||||

}

|

||||

]

|

||||

},

|

||||

{

|

||||

"name": "python3-youtube-transcript-api",

|

||||

"buildsystem": "simple",

|

||||

"build-commands": [

|

||||

"pip3 install --verbose --exists-action=i --no-index --find-links=\"file://${PWD}\" --prefix=${FLATPAK_DEST} \"youtube-transcript-api\" --no-build-isolation"

|

||||

],

|

||||

"sources": [

|

||||

{

|

||||

"type": "file",

|

||||

"url": "https://files.pythonhosted.org/packages/12/90/3c9ff0512038035f59d279fddeb79f5f1eccd8859f06d6163c58798b9487/certifi-2024.8.30-py3-none-any.whl",

|

||||

"sha256": "922820b53db7a7257ffbda3f597266d435245903d80737e34f8a45ff3e3230d8"

|

||||

},

|

||||

{

|

||||

"type": "file",

|

||||

"url": "https://files.pythonhosted.org/packages/f2/4f/e1808dc01273379acc506d18f1504eb2d299bd4131743b9fc54d7be4df1e/charset_normalizer-3.4.0.tar.gz",

|

||||

"sha256": "223217c3d4f82c3ac5e29032b3f1c2eb0fb591b72161f86d93f5719079dae93e"

|

||||

},

|

||||

{

|

||||

"type": "file",

|

||||

"url": "https://files.pythonhosted.org/packages/76/c6/c88e154df9c4e1a2a66ccf0005a88dfb2650c1dffb6f5ce603dfbd452ce3/idna-3.10-py3-none-any.whl",

|

||||

"sha256": "946d195a0d259cbba61165e88e65941f16e9b36ea6ddb97f00452bae8b1287d3"

|

||||

},

|

||||

{

|

||||

"type": "file",

|

||||

"url": "https://files.pythonhosted.org/packages/f9/9b/335f9764261e915ed497fcdeb11df5dfd6f7bf257d4a6a2a686d80da4d54/requests-2.32.3-py3-none-any.whl",

|

||||

"sha256": "70761cfe03c773ceb22aa2f671b4757976145175cdfca038c02654d061d6dcc6"

|

||||

},

|

||||

{

|

||||

"type": "file",

|

||||

"url": "https://files.pythonhosted.org/packages/ce/d9/5f4c13cecde62396b0d3fe530a50ccea91e7dfc1ccf0e09c228841bb5ba8/urllib3-2.2.3-py3-none-any.whl",

|

||||

"sha256": "ca899ca043dcb1bafa3e262d73aa25c465bfb49e0bd9dd5d59f1d0acba2f8fac"

|

||||

},

|

||||

{

|

||||

"type": "file",

|

||||

"url": "https://files.pythonhosted.org/packages/52/42/5f57d37d56bdb09722f226ed81cc1bec63942da745aa27266b16b0e16a5d/youtube_transcript_api-0.6.2-py3-none-any.whl",

|

||||

"sha256": "019dbf265c6a68a0591c513fff25ed5a116ce6525832aefdfb34d4df5567121c"

|

||||

}

|

||||

]

|

||||

},

|

||||

{

|

||||

"name": "python3-html2text",

|

||||

"buildsystem": "simple",

|

||||

@ -117,27 +168,56 @@

"name": "ollama",
"buildsystem": "simple",
"build-commands": [
"install -Dm0755 ollama* ${FLATPAK_DEST}/bin/ollama"
"cp -r --remove-destination * ${FLATPAK_DEST}/",
"mkdir ${FLATPAK_DEST}/plugins"
],
"sources": [
{
"type": "file",
"url": "https://github.com/ollama/ollama/releases/download/v0.3.0/ollama-linux-amd64",
"sha256": "b8817c34882c7ac138565836ac1995a2c61261a79315a13a0aebbfe5435da855",
"type": "archive",
"url": "https://github.com/ollama/ollama/releases/download/v0.3.12/ollama-linux-amd64.tgz",
"sha256": "f0efa42f7ad77cd156bd48c40cd22109473801e5113173b0ad04f094a4ef522b",
"only-arches": [
"x86_64"
]
},
{
"type": "file",
"url": "https://github.com/ollama/ollama/releases/download/v0.3.0/ollama-linux-arm64",
"sha256": "64be908749212052146f1008dd3867359c776ac1766e8d86291886f53d294d4d",
"type": "archive",
"url": "https://github.com/ollama/ollama/releases/download/v0.3.12/ollama-linux-arm64.tgz",
"sha256": "da631cbe4dd2c168dae58d6868b1ff60e881e050f2d07578f2f736e689fec04c",
"only-arches": [
"aarch64"
]
}
]
},
{
"name": "libnuma",
"buildsystem": "autotools",
"build-commands": [
"autoreconf -i",
"make",
"make install"
],
"sources": [
{
"type": "archive",
"url": "https://github.com/numactl/numactl/releases/download/v2.0.18/numactl-2.0.18.tar.gz",
"sha256": "b4fc0956317680579992d7815bc43d0538960dc73aa1dd8ca7e3806e30bc1274"
}
]
},
{
"name": "vte",
"buildsystem": "meson",
"config-opts": ["-Dvapi=false"],
"sources": [
{
"type": "archive",
"url": "https://gitlab.gnome.org/GNOME/vte/-/archive/0.78.0/vte-0.78.0.tar.gz",
"sha256": "82e19d11780fed4b66400f000829ce5ca113efbbfb7975815f26ed93e4c05f2d"
}
]
},
{
"name" : "alpaca",
"builddir" : true,

@ -145,7 +225,7 @@

"sources" : [
{
"type" : "git",
"url" : "file:///home/tentri/Documents/Alpaca",
"url": "https://github.com/Jeffser/Alpaca.git",
"branch" : "main"
}
]

|

||||
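Each source entry in the manifest hunks above pairs a download URL with a `sha256` digest. As a rough sketch only (this is not part of the Alpaca repository, and the function names `sha256_of` and `matches_manifest_digest` are hypothetical), a file that has already been downloaded could be checked against such a digest like this:

```python
import hashlib


def sha256_of(path, chunk_size=65536):
    """Return the hex SHA-256 digest of the file at `path`, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read fixed-size chunks so large archives are not loaded into memory at once.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_manifest_digest(path, expected_sha256):
    """Compare a file's digest with a "sha256" value copied from a source entry."""
    return sha256_of(path) == expected_sha256.strip().lower()
```

Assuming the numactl archive were saved locally as `numactl-2.0.18.tar.gz`, calling `matches_manifest_digest("numactl-2.0.18.tar.gz", "b4fc0956317680579992d7815bc43d0538960dc73aa1dd8ca7e3806e30bc1274")` would return `True` only if the file matches the digest pinned in the manifest.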

@ -5,5 +5,6 @@ Icon=com.jeffser.Alpaca

Terminal=false
Type=Application
Categories=Utility;Development;Chat;
Keywords=ai;ollama;llm
StartupNotify=true
X-Purism-FormFactor=Workstation;Mobile;

@ -63,16 +63,18 @@

</screenshot>
<screenshot>
<image>https://jeffser.com/images/alpaca/screenie4.png</image>
<caption>A conversation involving a YouTube video transcript</caption>
<caption>A Python script running inside integrated terminal</caption>
</screenshot>
<screenshot>
<image>https://jeffser.com/images/alpaca/screenie5.png</image>
<caption>A conversation involving a YouTube video transcript</caption>
</screenshot>
<screenshot>
<image>https://jeffser.com/images/alpaca/screenie6.png</image>
<caption>Multiple models being downloaded</caption>
</screenshot>
</screenshots>
<content_rating type="oars-1.1">
<content_attribute id="money-purchasing">mild</content_attribute>
</content_rating>
<content_rating type="oars-1.1" />
<url type="bugtracker">https://github.com/Jeffser/Alpaca/issues</url>
<url type="homepage">https://jeffser.com/alpaca/</url>
<url type="donation">https://github.com/sponsors/Jeffser</url>

@ -80,6 +82,255 @@

<url type="contribute">https://github.com/Jeffser/Alpaca/discussions/154</url>
<url type="vcs-browser">https://github.com/Jeffser/Alpaca</url>
<releases>
<release version="2.7.0" date="2024-10-15">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/2.7.0</url>
<description>
<p>New</p>
<ul>
<li>User messages are now compacted into bubbles</li>
</ul>
<p>Fixes</p>
<ul>
<li>Fixed re connection dialog not working when 'use local instance' is selected</li>
<li>Fixed model manager not adapting to large system fonts</li>
</ul>
</description>
</release>
<release version="2.6.5" date="2024-10-13">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/2.6.5</url>
<description>
<p>New</p>
<ul>
<li>Details page for models</li>
<li>Model selector gets replaced with 'manage models' button when there are no models downloaded</li>
<li>Added warning when model is too big for the device</li>
<li>Added AMD GPU indicator in preferences</li>
</ul>
</description>
</release>
<release version="2.6.0" date="2024-10-11">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/2.6.0</url>
<description>
<p>New</p>
<ul>
<li>Better system for handling dialogs</li>
<li>Better system for handling instance switching</li>
<li>Remote connection dialog</li>
</ul>
<p>Fixes</p>
<ul>
<li>Fixed: Models get duplicated when switching remote and local instance</li>
<li>Better internal instance manager</li>
</ul>
</description>
</release>
<release version="2.5.1" date="2024-10-09">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/2.5.1</url>
<description>
<p>New</p>
<ul>
<li>Added 'Cancel' and 'Save' buttons when editing a message</li>
</ul>
<p>Fixes</p>
<ul>
<li>Better handling of image recognition</li>
<li>Remove unused files when canceling a model download</li>
<li>Better message blocks rendering</li>
</ul>
</description>
</release>
<release version="2.5.0" date="2024-10-06">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/2.5.0</url>
<description>
<p>New</p>
<ul>
<li>Run bash and python scripts straight from chat</li>
<li>Updated Ollama to 0.3.12</li>
<li>New models!</li>
</ul>
<p>Fixes</p>
<ul>
<li>Fixed and made faster the launch sequence</li>
<li>Better detection of code blocks in messages</li>
<li>Fixed app not loading in certain setups with Nvidia GPUs</li>
</ul>
</description>
</release>
<release version="2.0.6" date="2024-09-29">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/2.0.6</url>
<description>
<p>Fixes</p>
<ul>
<li>Fixed message notification sometimes crashing text rendering because of them running on different threads</li>
</ul>
</description>
</release>
<release version="2.0.5" date="2024-09-25">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/2.0.5</url>
<description>
<p>Fixes</p>
<ul>
<li>Fixed message generation sometimes failing</li>
</ul>
</description>
</release>
<release version="2.0.4" date="2024-09-22">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/2.0.4</url>
<description>
<p>New</p>
<ul>
<li>Sidebar resizes with the window</li>
<li>New welcome dialog</li>
<li>Message search</li>
<li>Updated Ollama to v0.3.11</li>
<li>A lot of new models provided by Ollama repository</li>
</ul>
<p>Fixes</p>
<ul>
<li>Fixed text inside model manager when the accessibility option 'large text' is on</li>
<li>Fixed image recognition on unsupported models</li>
</ul>
</description>
</release>
<release version="2.0.3" date="2024-09-18">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/2.0.3</url>
<description>
<p>Fixes</p>
<ul>
<li>Fixed spinner not hiding if the back end fails</li>
<li>Fixed image recognition with local images</li>
<li>Changed appearance of delete / stop model buttons</li>
<li>Fixed stop button crashing the app</li>
</ul>
<p>New</p>
<ul>
<li>Made sidebar resize a little when the window is smaller</li>
<li>Instant launch</li>
</ul>
</description>
</release>
<release version="2.0.2" date="2024-09-11">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/2.0.2</url>
<description>
<p>Fixes</p>
<ul>
<li>Fixed error on first run (welcome dialog)</li>
<li>Fixed checker for Ollama instance (used on system packages)</li>
</ul>
</description>
</release>
<release version="2.0.1" date="2024-09-11">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/2.0.1</url>
<description>
<p>Fixes</p>
<ul>
<li>Fixed 'clear chat' option</li>
<li>Fixed welcome dialog causing the local instance to not launch</li>
<li>Fixed support for AMD GPUs</li>
</ul>
</description>
</release>
<release version="2.0.0" date="2024-09-01">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/2.0.0</url>
<description>
<p>New</p>
<ul>
<li>Model, message and chat systems have been rewritten</li>
<li>New models are available</li>
<li>Ollama updated to v0.3.9</li>
<li>Added support for multiple chat generations simultaneously</li>
<li>Added experimental AMD GPU support</li>
<li>Added message loading spinner and new message indicator to chat tab</li>
<li>Added animations</li>
<li>Changed model manager / model selector appearance</li>
<li>Changed message appearance</li>
<li>Added markdown and code blocks to user messages</li>
<li>Added loading dialog at launch so the app opens faster</li>
<li>Added warning when device is on 'battery saver' mode</li>
<li>Added inactivity timer to integrated instance</li>
</ul>
<ul>
<li>The chat is now scrolled to the bottom when it's changed</li>
<li>Better handling of focus on messages</li>
<li>Better general performance on the app</li>
</ul>
</description>
</release>
<release version="1.1.1" date="2024-08-12">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/1.1.1</url>
<description>
<p>New</p>
<ul>
<li>New duplicate chat option</li>
<li>Changed model selector appearance</li>
<li>Message entry is focused on launch and chat change</li>
<li>Message is focused when it's being edited</li>
<li>Added loading spinner when regenerating a message</li>
<li>Added Ollama debugging to 'About Alpaca' dialog</li>
<li>Changed YouTube transcription dialog appearance and behavior</li>
</ul>
<p>Fixes</p>
<ul>
<li>CTRL+W and CTRL+Q stops local instance before closing the app</li>
<li>Changed appearance of 'Open Model Manager' button on welcome screen</li>
<li>Fixed message generation not working consistently</li>
<li>Fixed message edition not working consistently</li>
</ul>
</description>
</release>
<release version="1.1.0" date="2024-08-10">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/1.1.0</url>
<description>
<p>New</p>
<ul>
<li>Model manager opens faster</li>
<li>Delete chat option in secondary menu</li>
<li>New model selector popup</li>
<li>Standard shortcuts</li>
<li>Model manager is navigable with keyboard</li>
<li>Changed sidebar collapsing behavior</li>
<li>Focus indicators on messages</li>
<li>Welcome screen</li>
<li>Give message entry focus at launch</li>
<li>Generally better code</li>
</ul>
<p>Fixes</p>
<ul>
<li>Better width for dialogs</li>
<li>Better compatibility with screen readers</li>
<li>Fixed message regenerator</li>
<li>Removed 'Featured models' from welcome dialog</li>
<li>Added default buttons to dialogs</li>
<li>Fixed import / export of chats</li>
<li>Changed Python2 title to Python on code blocks</li>
<li>Prevent regeneration of title when the user changed it to a custom title</li>
<li>Show date on stopped messages</li>
<li>Fix clear chat error</li>
</ul>
</description>
</release>
<release version="1.0.6" date="2024-08-04">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/1.0.6</url>
<description>
<p>New</p>
<ul>
<li>Changed shortcuts to standards</li>
<li>Moved 'Manage Models' button to primary menu</li>
<li>Stable support for GGUF model files</li>
<li>General optimizations</li>
</ul>
<p>Fixes</p>
<ul>
<li>Better handling of enter key (important for Japanese input)</li>
<li>Removed sponsor dialog</li>
<li>Added sponsor link in about dialog</li>
<li>Changed window and elements dimensions</li>
<li>Selected model changes when entering model manager</li>
<li>Better image tooltips</li>
<li>GGUF Support</li>
</ul>
</description>
</release>
<release version="1.0.5" date="2024-08-02">
<url type="details">https://github.com/Jeffser/Alpaca/releases/tag/1.0.5</url>
<description>

@ -1,5 +1,5 @@

project('Alpaca', 'c',
version: '1.0.5',
version: '2.7.0',
meson_version: '>= 0.62.0',
default_options: [ 'warning_level=2', 'werror=false', ],
)

@ -4,4 +4,10 @@ pt_BR

fr
nb_NO
bn
zh_CN
zh_Hans
hi
tr
uk
de
he
te

@ -5,5 +5,11 @@ src/main.py

src/window.py
src/available_models_descriptions.py
src/connection_handler.py
src/dialogs.py
src/window.ui
src/generic_actions.py
src/custom_widgets/chat_widget.py
src/custom_widgets/message_widget.py
src/custom_widgets/model_widget.py
src/custom_widgets/table_widget.py
src/custom_widgets/dialog_widget.py
src/custom_widgets/terminal_widget.py

2586

po/alpaca.pot

File diff suppressed because it is too large

File diff suppressed because it is too large

2741

po/nb_NO.po

File diff suppressed because it is too large

2911

po/pt_BR.po

File diff suppressed because it is too large

3083

po/zh_Hans.po

Normal file

File diff suppressed because it is too large

93

snap/snapcraft.yaml

Normal file

@ -0,0 +1,93 @@

name: jeffser-alpaca
base: core24
adopt-info: alpaca

platforms:
  amd64:
  arm64:

confinement: strict
grade: stable
compression: lzo

slots:
  dbus-alpaca:
    interface: dbus
    bus: session
    name: com.jeffser.Alpaca

apps:
  alpaca:
    command: usr/bin/alpaca
    common-id: com.jeffser.Alpaca
    extensions:
      - gnome
    plugs:
      - network
      - network-bind
      - home
      - removable-media

  ollama:
    command: bin/ollama
    plugs:
      - home
      - removable-media
      - network
      - network-bind

  ollama-daemon:
    command: bin/ollama serve
    daemon: simple
    install-mode: enable
    restart-condition: on-failure
    plugs:
      - home
      - removable-media
      - network
      - network-bind

parts:
  # Python dependencies
  python-deps:
    plugin: python
    source: .
    python-packages:
      - requests==2.31.0
      - pillow==10.3.0
      - pypdf==4.2.0
      - pytube==15.0.0
      - html2text==2024.2.26

  # Ollama plugin
  ollama:
    plugin: dump
    source:
      - on amd64: https://github.com/ollama/ollama/releases/download/v0.3.12/ollama-linux-amd64.tgz
      - on arm64: https://github.com/ollama/ollama/releases/download/v0.3.12/ollama-linux-arm64.tgz

  # Alpaca app
  alpaca:
    plugin: meson
    source-type: git
    source: https://github.com/Jeffser/Alpaca.git
    source-tag: 2.6.5
    source-depth: 1
    meson-parameters:
      - --prefix=/snap/alpaca/current/usr
    override-build: |
      craftctl default
      sed -i '1c#!/usr/bin/env python3' $CRAFT_PART_INSTALL/snap/alpaca/current/usr/bin/alpaca
    parse-info:
      - usr/share/metainfo/com.jeffser.Alpaca.metainfo.xml
    organize:
      snap/alpaca/current: .
    after: [python-deps]

  deps:
    plugin: nil
    after: [alpaca]
    stage-packages:
      - libnuma1
    prime:
      - usr/lib/*/libnuma.so.1*

@ -29,6 +29,11 @@

<file alias="icons/scalable/status/edit-symbolic.svg">icons/edit-symbolic.svg</file>
<file alias="icons/scalable/status/image-missing-symbolic.svg">icons/image-missing-symbolic.svg</file>
<file alias="icons/scalable/status/update-symbolic.svg">icons/update-symbolic.svg</file>
<file alias="icons/scalable/status/down-symbolic.svg">icons/down-symbolic.svg</file>
<file alias="icons/scalable/status/chat-bubble-text-symbolic.svg">icons/chat-bubble-text-symbolic.svg</file>
<file alias="icons/scalable/status/execute-from-symbolic.svg">icons/execute-from-symbolic.svg</file>
<file alias="icons/scalable/status/cross-large-symbolic.svg">icons/cross-large-symbolic.svg</file>
<file alias="icons/scalable/status/info-outline-symbolic.svg">icons/info-outline-symbolic.svg</file>
<file preprocess="xml-stripblanks">window.ui</file>
<file preprocess="xml-stripblanks">gtk/help-overlay.ui</file>
</gresource>

File diff suppressed because it is too large

@ -1,11 +1,13 @@

descriptions = {
'llama3.2': _("Meta's Llama 3.2 goes small with 1B and 3B models."),
'llama3.1': _("Llama 3.1 is a new state-of-the-art model from Meta available in 8B, 70B and 405B parameter sizes."),
'gemma2': _("Google Gemma 2 is now available in 2 sizes, 9B and 27B."),
'gemma2': _("Google Gemma 2 is a high-performing and efficient model available in three sizes: 2B, 9B, and 27B."),
'qwen2.5': _("Qwen2.5 models are pretrained on Alibaba's latest large-scale dataset, encompassing up to 18 trillion tokens. The model supports up to 128K tokens and has multilingual support."),
'phi3.5': _("A lightweight AI model with 3.8 billion parameters with performance overtaking similarly and larger sized models."),
'nemotron-mini': _("A commercial-friendly small language model by NVIDIA optimized for roleplay, RAG QA, and function calling."),
'mistral-small': _("Mistral Small is a lightweight model designed for cost-effective use in tasks like translation and summarization."),
'mistral-nemo': _("A state-of-the-art 12B model with 128k context length, built by Mistral AI in collaboration with NVIDIA."),
'mistral-large': _("Mistral Large 2 is Mistral's new flagship model that is significantly more capable in code generation, mathematics, and reasoning with 128k context window and support for dozens of languages."),
'qwen2': _("Qwen2 is a new series of large language models from Alibaba group"),
'deepseek-coder-v2': _("An open-source Mixture-of-Experts code language model that achieves performance comparable to GPT4-Turbo in code-specific tasks."),
'phi3': _("Phi-3 is a family of lightweight 3B (Mini) and 14B (Medium) state-of-the-art open models by Microsoft."),
'mistral': _("The 7B model released by Mistral AI, updated to version 0.3."),
'mixtral': _("A set of Mixture of Experts (MoE) model with open weights by Mistral AI in 8x7b and 8x22b parameter sizes."),
'codegemma': _("CodeGemma is a collection of powerful, lightweight models that can perform a variety of coding tasks like fill-in-the-middle code completion, code generation, natural language understanding, mathematical reasoning, and instruction following."),

@ -15,91 +17,108 @@ descriptions = {

'llama3': _("Meta Llama 3: The most capable openly available LLM to date"),
'gemma': _("Gemma is a family of lightweight, state-of-the-art open models built by Google DeepMind. Updated to version 1.1"),
'qwen': _("Qwen 1.5 is a series of large language models by Alibaba Cloud spanning from 0.5B to 110B parameters"),
'qwen2': _("Qwen2 is a new series of large language models from Alibaba group"),
'phi3': _("Phi-3 is a family of lightweight 3B (Mini) and 14B (Medium) state-of-the-art open models by Microsoft."),
'llama2': _("Llama 2 is a collection of foundation language models ranging from 7B to 70B parameters."),
'codellama': _("A large language model that can use text prompts to generate and discuss code."),
'dolphin-mixtral': _("Uncensored, 8x7b and 8x22b fine-tuned models based on the Mixtral mixture of experts models that excels at coding tasks. Created by Eric Hartford."),
'nomic-embed-text': _("A high-performing open embedding model with a large token context window."),
'llama2-uncensored': _("Uncensored Llama 2 model by George Sung and Jarrad Hope."),
'mxbai-embed-large': _("State-of-the-art large embedding model from mixedbread.ai"),
'dolphin-mixtral': _("Uncensored, 8x7b and 8x22b fine-tuned models based on the Mixtral mixture of experts models that excels at coding tasks. Created by Eric Hartford."),
'phi': _("Phi-2: a 2.7B language model by Microsoft Research that demonstrates outstanding reasoning and language understanding capabilities."),
'deepseek-coder': _("DeepSeek Coder is a capable coding model trained on two trillion code and natural language tokens."),
'dolphin-mistral': _("The uncensored Dolphin model based on Mistral that excels at coding tasks. Updated to version 2.8."),
'orca-mini': _("A general-purpose model ranging from 3 billion parameters to 70 billion, suitable for entry-level hardware."),
'dolphin-llama3': _("Dolphin 2.9 is a new model with 8B and 70B sizes by Eric Hartford based on Llama 3 that has a variety of instruction, conversational, and coding skills."),
'mxbai-embed-large': _("State-of-the-art large embedding model from mixedbread.ai"),
'starcoder2': _("StarCoder2 is the next generation of transparently trained open code LLMs that comes in three sizes: 3B, 7B and 15B parameters."),
'mistral-openorca': _("Mistral OpenOrca is a 7 billion parameter model, fine-tuned on top of the Mistral 7B model using the OpenOrca dataset."),
'yi': _("Yi 1.5 is a high-performing, bilingual language model."),
'llama2-uncensored': _("Uncensored Llama 2 model by George Sung and Jarrad Hope."),